In modern web development, we're witnessing the rise of a new power couple: live data APIs and generative AI. Separately, they are transformative. Together, they represent a paradigm shift, enabling developers to build applications that are not just functional, but dynamic, context-aware, and visually unique. This isn't just theory; it's a practical, hands-on revolution in how we create digital experiences, a shift that is comprehensively explored within the broader context of Google DeepMind's Anti-Gravity AI development environment.

The Modern Power Couple: Why Every Developer Should Combine APIs and AI

For years, developers have relied on APIs to pull in everything from stock prices to social media feeds. This is the lifeblood of the dynamic web. On the other side of the spectrum, generative AI has emerged as a boundless well of creativity, capable of producing images, text, and even code on demand. The real magic, however, happens when you build a bridge between the two. This is precisely how we're accelerating development with parallel AI agents, making these integrations even more powerful.

Beyond Static Content: The Magic of Live Data via APIs

An Application Programming Interface (API) is your application's window to the world. It allows your code to request and receive up-to-the-minute information from external services. This means your app can react to real-world events, providing users with timely and relevant content instead of being stuck with static, pre-packaged information.

Your Personal Art Department: What Are AI-Generated Assets?

Think of generative AI models like DALL-E or Stable Diffusion as an art department at your beck and call. By providing a simple text prompt, you can generate custom icons, logos, banners, and illustrations in seconds. This eliminates the need for generic stock photos or time-consuming design work, allowing for truly bespoke visual identities.

The Synergy: Creating Richer, Context-Aware User Experiences

When you combine a live data feed with an AI asset generator, the data supplies the context and the AI turns that context into creative output. Imagine a travel app that doesn't just show you the weather in Paris, but generates a beautiful, unique icon of the Eiffel Tower under a sunny sky or gentle rain. This active partnership between developers and AI is a prime example of Human-AI Collaboration in action, a future we're building today.

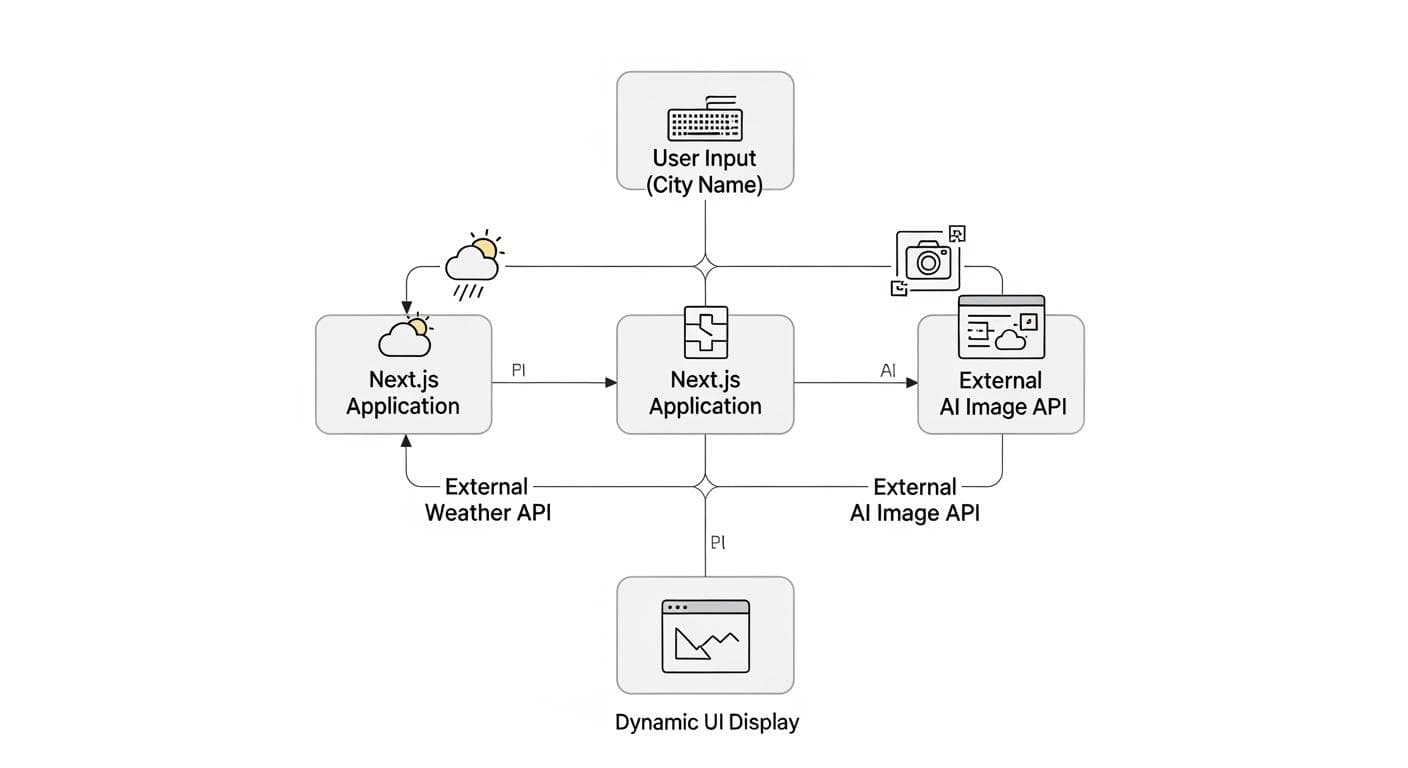

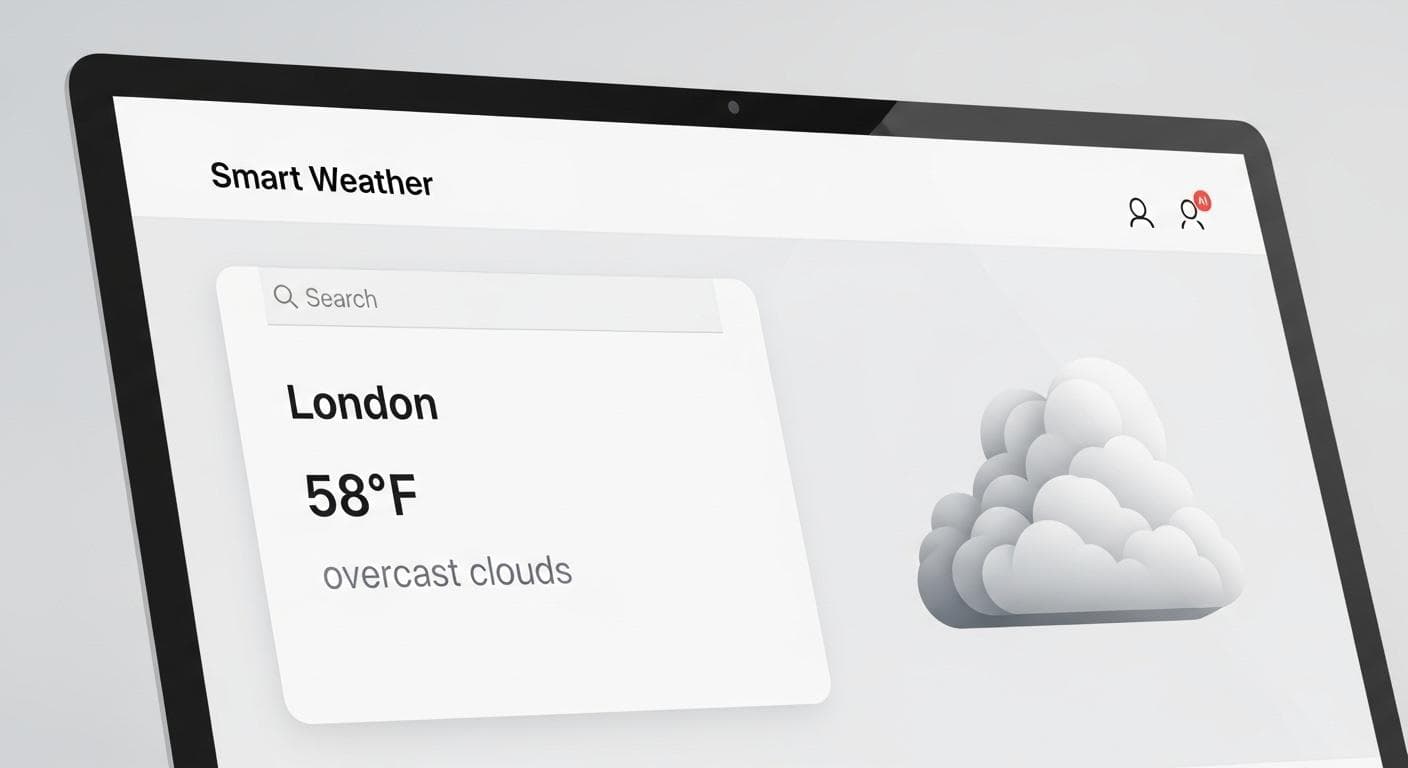

Project Blueprint: We're Building a "Smart Weather" App

To make this concrete, we're going to build a simple yet powerful application from scratch. This project will serve as the definitive, end-to-end tutorial for this modern workflow. For managing complex projects and ensuring smooth execution, consider mastering development artifacts like task lists and implementation plans.

The Goal: A simple dashboard where a user enters a city, and the app displays the current weather conditions (e.g., "Clear, 75°F") alongside a unique, AI-generated icon that visually represents those exact conditions.

Our Toolkit: We'll use a popular and robust stack perfect for this task.

- Next.js: A React framework that makes building full-stack applications intuitive and efficient.

- A Free Weather API: We'll use OpenWeatherMap for its reliability and generous free tier.

- An AI Image API: We'll integrate with a service like Stability AI or OpenAI to generate our weather icons.

Phase 1: Fetching Reality - Connecting to a Live Weather API

First, we need to get real-world data into our application. This involves choosing a provider, securing our credentials, and writing the code to make the request.

Step 1: Choosing Your Data Source (e.g., OpenWeatherMap API)

Head over to a weather API provider like OpenWeatherMap, sign up for a free account, and navigate to your dashboard to find your API key. This key is a unique string that identifies you and authorizes your requests. Guard it carefully!

Step 2: Securing Your Keys (The Right Way with .env.local)

Never, ever hard-code your API keys directly into your source code. This is a massive security risk. Instead, use environment variables. In a Next.js project, create a file named .env.local in your project's root directory.

Inside this file, add your API keys:

    OPENWEATHER_API_KEY=your_actual_api_key_here
    AI_IMAGE_API_KEY=your_stability_or_openai_key_here

(Make sure .env.local is listed in your .gitignore file so the keys are never committed.)
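A missing key otherwise tends to surface only as a confusing upstream error. As a minimal sketch, a small helper can fail fast with a clear message instead. The name `requireEnv` is hypothetical and not part of Next.js; the environment object is passed in explicitly so the helper stays easy to test.

```typescript
// Hypothetical helper (not a Next.js API): read a required environment
// variable from the given object, or throw a descriptive error.
type Env = Record<string, string | undefined>;

function requireEnv(name: string, env: Env): string {
  const value = env[name];
  // Treat unset and blank values the same way: both are configuration errors.
  if (value === undefined || value.trim() === '') {
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// Possible usage inside an API route:
// const apiKey = requireEnv('OPENWEATHER_API_KEY', process.env);
```

Calling it at the top of each API route means a forgotten .env.local entry shows up as an explicit error rather than a failed request.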

Step 3: Making the API Call and Understanding the JSON Response

We'll create an API route within Next.js to handle the communication with the weather service. This acts as a proxy, protecting our API key from being exposed to the client's browser.

Create a file at pages/api/weather.ts:

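    import type { NextApiRequest, NextApiResponse } from 'next';

    export default async function handler(req: NextApiRequest, res: NextApiResponse) {
      const { city } = req.query;
      const apiKey = process.env.OPENWEATHER_API_KEY;

      // Query values can be string | string[]; accept only a single non-empty string.
      if (typeof city !== 'string' || !city.trim()) {
        return res.status(400).json({ error: 'City is required' });
      }

      if (!apiKey) {
        return res.status(500).json({ error: 'Weather API key is not configured' });
      }

      try {
        // Encode the user input so spaces and special characters cannot break the URL.
        const response = await fetch(
          `https://api.openweathermap.org/data/2.5/weather?q=${encodeURIComponent(city)}&appid=${apiKey}&units=imperial`
        );
        const data = await response.json();

        if (response.ok) {
          res.status(200).json(data);
        } else {
          // Forward the upstream status (e.g. 404 for an unknown city) and its message.
          res.status(response.status).json({ error: data.message });
        }
      } catch (error) {
        res.status(500).json({ error: 'Failed to fetch weather data' });
      }
    }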

This handler takes city as a query parameter, securely calls the OpenWeatherMap API using our environment variable, and forwards the JSON response back to our front end.
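The front end only needs three fields from that response: `name`, `main.temp`, and `weather[0].description`. The sketch below narrows an untyped payload to just those fields. The `WeatherSummary` type and `toWeatherSummary` function are illustrative names, not part of any SDK, and the real payload contains many more fields than are modeled here.

```typescript
// The three fields this tutorial reads; the real response has many more.
interface WeatherSummary {
  city: string;
  description: string;
  tempF: number; // rounded, assuming the request used units=imperial
}

// Narrow an unknown JSON payload to a WeatherSummary, or return null
// when any of the expected fields is missing or has the wrong type.
function toWeatherSummary(payload: unknown): WeatherSummary | null {
  if (typeof payload !== 'object' || payload === null) return null;
  const p = payload as {
    name?: unknown;
    main?: { temp?: unknown };
    weather?: Array<{ description?: unknown }>;
  };
  const description = Array.isArray(p.weather) ? p.weather[0]?.description : undefined;
  const temp = p.main?.temp;
  if (typeof p.name !== 'string' || typeof description !== 'string' || typeof temp !== 'number') {
    return null;
  }
  return { city: p.name, description, tempF: Math.round(temp) };
}
```

Validating at this boundary keeps the UI code from crashing on `weather[0]` when the upstream service returns an unexpected shape.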

![A visual representation of a JSON response from a weather API, with key fields like 'main.temp', 'weather[0].description', and 'name' highlighted with glowing boxes.](/static/images/posts/integrating-apis-assets-anti-gravity-json-response-highlight.jpg)

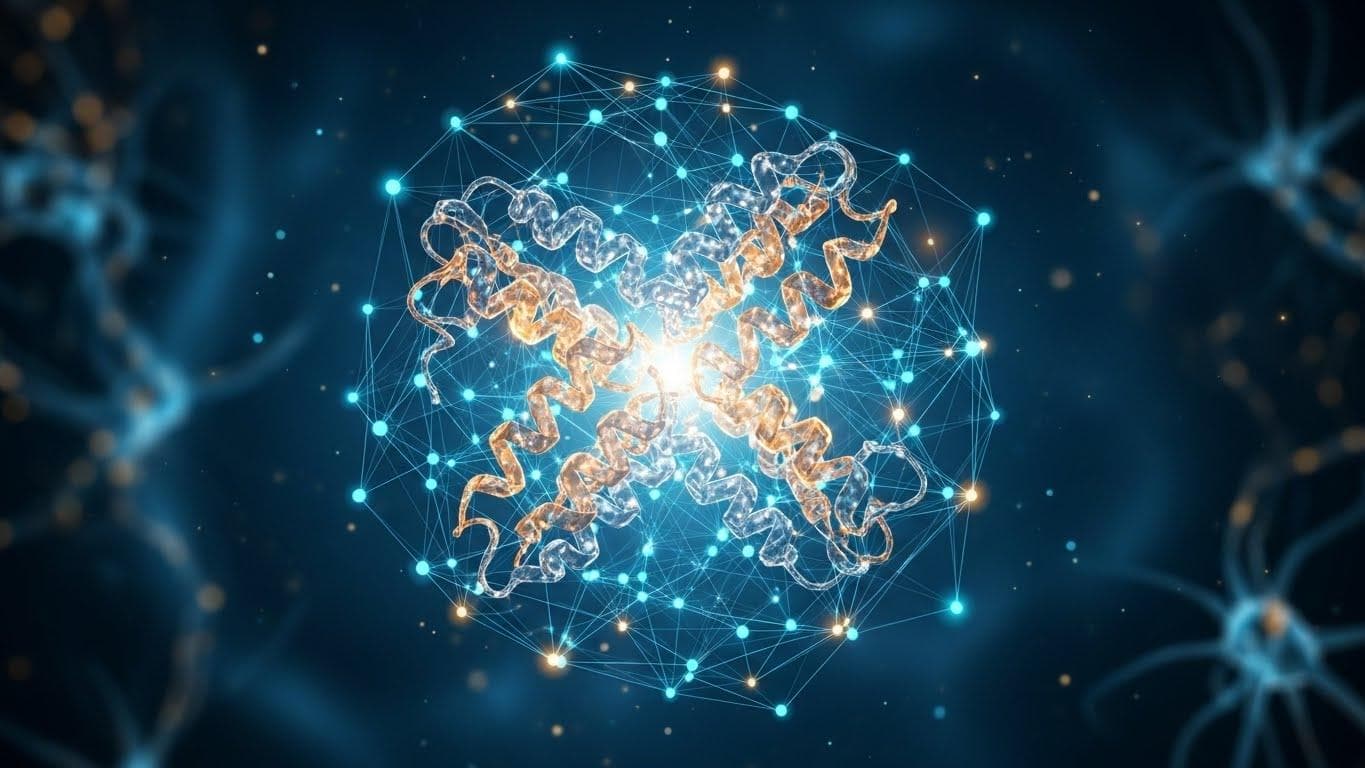

Phase 2: Generating Originality - Creating Assets with AI

Now for the creative part. We'll set up a similar API route to communicate with our chosen AI image generation service.

Step 1: Choosing Your AI Image Service

Services like Stability AI and OpenAI (with DALL-E) offer powerful APIs for image generation. You can interact with them directly via their developer platforms, or through third-party services that build on their models, such as ClipDrop by Jasper. Sign up for your chosen service, get your API key, and add it to your .env.local file as we did before.

Step 2: The Art of the Prompt: How to Ask for Usable UI Assets

Prompt engineering is key to getting good results. We don't want a photorealistic masterpiece; we want a clean, usable UI icon. A good prompt for this use case might be:

"Minimalist vector icon of a bright sun with clear blue sky, flat design, simple, on a white background."

This prompt is specific, defining the style (minimalist vector, flat design), the subject (sun, clear sky), and the presentation (white background). This level of detail guides the AI to produce a consistent and appropriate asset.
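The three parts of that prompt (style, subject, presentation) can be kept separate in code so that only the subject changes per request. This is a minimal sketch; `buildIconPrompt` and its default values are assumptions chosen to reproduce the example prompt above, not a provider requirement.

```typescript
interface IconPromptParts {
  subject: string;      // what to draw, e.g. "a bright sun with clear blue sky"
  styles?: string[];    // style keywords appended after the subject
  background?: string;  // presentation, e.g. "a white background"
}

// Assemble a prompt from its parts so the style stays consistent across icons.
function buildIconPrompt({
  subject,
  styles = ['flat design', 'simple'],
  background = 'a white background',
}: IconPromptParts): string {
  // Collapse stray whitespace so equivalent subjects produce identical prompts.
  const cleanSubject = subject.trim().replace(/\s+/g, ' ');
  if (!cleanSubject) throw new Error('subject must not be empty');
  return [`Minimalist vector icon of ${cleanSubject}`, ...styles, `on ${background}`].join(', ') + '.';
}
```

With the defaults, `buildIconPrompt({ subject: 'a bright sun with clear blue sky' })` yields the same text as the example prompt.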

Step 3: Code Deep Dive: Writing a Function to Generate an Image

Let's create another API route at pages/api/generateIcon.ts. The exact code will vary based on your chosen AI provider's SDK, but the concept is the same.

    import type { NextApiRequest, NextApiResponse } from 'next';

    // This is a conceptual example: 'ai-image-sdk' and SomeAIImageClient are placeholders,
    // not a real package. Replace them with the official client for your service
    // (for example, the 'openai' package for OpenAI) and adapt the call below to its API.
    import { SomeAIImageClient } from 'ai-image-sdk';

    const aiClient = new SomeAIImageClient(process.env.AI_IMAGE_API_KEY);

    export default async function handler(req: NextApiRequest, res: NextApiResponse) {
      // The front end sends the prompt in a JSON body, so only POST is accepted.
      if (req.method !== 'POST') {
        return res.status(405).json({ error: 'Method not allowed' });
      }

      const { prompt } = req.body;

      if (typeof prompt !== 'string' || !prompt.trim()) {
        return res.status(400).json({ error: 'Prompt is required' });
      }

      try {
        const response = await aiClient.generate({
          prompt,
          style: 'icon',
          size: '512x512',
        });
        const imageUrl = response.images[0].url;
        res.status(200).json({ imageUrl });
      } catch (error) {
        res.status(500).json({ error: 'Failed to generate image' });
      }
    }
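For a more concrete picture, here is a sketch of the same step written against OpenAI's Images REST endpoint using plain `fetch`, with no SDK. The endpoint path, the request fields (`model`, `prompt`, `n`, `size`), and the `data[0].url` response shape reflect the API as commonly documented, but model names and supported sizes change over time, so treat those values as assumptions and check the provider's current reference. The function names are illustrative.

```typescript
interface ImageRequest {
  url: string;
  init: { method: 'POST'; headers: Record<string, string>; body: string };
}

// Build the HTTP request for one image. Model and size are assumed values.
function buildImageRequest(prompt: string, apiKey: string, model = 'dall-e-3'): ImageRequest {
  return {
    url: 'https://api.openai.com/v1/images/generations',
    init: {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({ model, prompt, n: 1, size: '1024x1024' }),
    },
  };
}

// Pull the first image URL out of the response body, or null if absent.
function extractImageUrl(payload: unknown): string | null {
  const first = (payload as { data?: Array<{ url?: unknown }> } | null)?.data?.[0];
  return typeof first?.url === 'string' ? first.url : null;
}

// Send the request and return the generated image URL, throwing on failure.
async function generateIconUrl(prompt: string, apiKey: string): Promise<string> {
  const { url, init } = buildImageRequest(prompt, apiKey);
  const response = await fetch(url, init);
  if (!response.ok) throw new Error(`Image API returned ${response.status}`);
  const imageUrl = extractImageUrl(await response.json());
  if (!imageUrl) throw new Error('Image API response contained no URL');
  return imageUrl;
}
```

Inside the API route, `generateIconUrl(prompt, process.env.AI_IMAGE_API_KEY)` would stand in for the placeholder `aiClient.generate` call. Keeping the request builder and the response parser as separate pure functions makes both easy to unit test without network access.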

Phase 3: The Grand Integration - Making the Two Systems Talk

This is where the magic happens. We'll build a simple front-end UI and write the logic that connects our weather data directly to our AI image generator.

Step 1: Building the Basic UI to Display Weather Data

In your main page file (e.g., pages/index.tsx), create a simple form and state variables to hold the city input, weather data, and the generated image URL.

    // In pages/index.tsx
    import { useState } from 'react';

    export default function HomePage() {
      const [city, setCity] = useState('');
      // Typed loosely as `any` for brevity; a dedicated interface is safer in real code.
      const [weather, setWeather] = useState<any>(null);
      const [iconUrl, setIconUrl] = useState('');
      const [loading, setLoading] = useState(false);

      // ... form submission logic (handleSubmit, written in Step 2) will go here

      return (
        <div>
          <h1>Smart Weather Dashboard</h1>
          <form onSubmit={handleSubmit}>
            <input
              type="text"
              value={city}
              onChange={(e) => setCity(e.target.value)}
              placeholder="Enter a city"
            />
            <button type="submit" disabled={loading}>
              {loading ? 'Thinking...' : 'Get Weather'}
            </button>
          </form>
          {weather && (
            <div>
              <h2>{weather.name}</h2>
              <p>{weather.weather[0].description}, {Math.round(weather.main.temp)}°F</p>
              {iconUrl && <img src={iconUrl} alt="AI generated weather icon" />}
            </div>
          )}
        </div>
      );
    }

Step 2: The "Aha!" Moment: Using Weather Data to Dynamically Generate a Prompt

Inside your form's submit handler is where we bridge the two worlds. After fetching the weather, we'll use its description to create a custom prompt for the AI.

    const handleSubmit = async (e: React.FormEvent<HTMLFormElement>) => {
      e.preventDefault();
      setLoading(true);
      setWeather(null);
      setIconUrl('');

      try {
        // 1. Fetch weather data (encode the city so spaces and symbols survive the query string)
        const weatherResponse = await fetch(`/api/weather?city=${encodeURIComponent(city)}`);
        const weatherData = await weatherResponse.json();

        if (weatherResponse.ok) {
          setWeather(weatherData);

          // 2. Dynamically create the AI prompt
          const description = weatherData.weather[0].description;
          const temp = Math.round(weatherData.main.temp);
          const dynamicPrompt = `Minimalist vector icon of ${description} at ${temp}°F, flat design, simple, clean UI element, on a white background.`;

          // 3. Call our image generation API
          const iconResponse = await fetch('/api/generateIcon', {
            method: 'POST',
            headers: { 'Content-Type': 'application/json' },
            body: JSON.stringify({ prompt: dynamicPrompt }),
          });
          const iconData = await iconResponse.json();

          if (iconResponse.ok) {
            setIconUrl(iconData.imageUrl);
          }
        }
      } catch (error) {
        // Network or parsing failure: log it and leave the UI in its empty state.
        console.error('Request failed', error);
      } finally {
        // Always re-enable the button, even if a request threw.
        setLoading(false);
      }
    };
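One design point worth noting: the prompt in the handler above embeds the exact rounded temperature, so 74°F and 76°F produce different prompts and therefore different, separately billed images. A possible variation, sketched below, maps the temperature to a coarse band so that similar conditions share one prompt. The band names and thresholds are purely illustrative assumptions, and `buildWeatherPrompt` is a hypothetical helper.

```typescript
type TemperatureBand = 'freezing' | 'cold' | 'mild' | 'warm' | 'hot';

// Illustrative thresholds in °F; tune them to taste.
function temperatureBand(tempF: number): TemperatureBand {
  if (tempF <= 32) return 'freezing';
  if (tempF < 50) return 'cold';
  if (tempF < 70) return 'mild';
  if (tempF < 85) return 'warm';
  return 'hot';
}

// Build the icon prompt from a description and a temperature band,
// normalizing case and whitespace so equivalent inputs match exactly.
function buildWeatherPrompt(description: string, tempF: number): string {
  const subject = description.trim().toLowerCase();
  return `Minimalist vector icon of ${subject} on a ${temperatureBand(tempF)} day, flat design, simple, clean UI element, on a white background.`;
}
```

The trade-off is less literal detail in each icon in exchange for far fewer distinct prompts, which matters once generated images are cached by prompt.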

Step 3: Putting It All Together

With the code above, the entire flow is complete. The user enters a city. Your app fetches live weather data. It then uses that data to craft a highly specific prompt, asks the AI to generate a unique icon, and displays both the data and the custom-made visual to the user. You've created a truly dynamic, context-aware experience.

Beyond the Tutorial: Common Pitfalls and Next Steps

This workflow is incredibly powerful, but it's important to consider real-world production challenges.

- Mind the Meter: Understanding API Costs: Generative AI APIs are rarely free. Calls are often priced per image generated, and costs can add up quickly during development and in production. Always check the pricing model for your chosen service and set up budget alerts. This financial reality is the single biggest motivation for implementing smart caching.

- Handling API Rate Limits and Errors Gracefully: What happens if an API is down or you exceed your request limit? Implement robust error handling (try-catch blocks) and display user-friendly messages. Never let a failed API call crash your entire application.

- Caching Strategies for AI Assets to Reduce Cost and Latency: AI image generation can be slow and expensive. If you get a request for "sunny in Los Angeles," chances are you'll get another one soon. Implement a caching layer (like Redis or a simple database) to store generated images for common prompts. Before calling the AI API, check if you've already generated an image for that exact prompt. Here's a conceptual example for your API route:

      // Pseudo-code for a cached API route
      const cachedImageUrl = await redis.get(prompt);

      if (cachedImageUrl) {
        // If found in cache, return it immediately!
        return res.status(200).json({ imageUrl: cachedImageUrl, source: 'cache' });
      } else {
        // If not in cache, call the expensive AI API
        const response = await aiClient.generate({ prompt });
        const newImageUrl = response.images[0].url;

        // Store the new image URL in the cache for next time
        await redis.set(prompt, newImageUrl, 'EX', 3600); // Cache for 1 hour
        return res.status(200).json({ imageUrl: newImageUrl, source: 'api' });
      }

- Expanding the Concept: This doesn't stop with images. You could use live financial data to feed a language model to generate a market summary, or use user location data to generate personalized travel itinerary suggestions. The possibilities are endless.
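Returning to the rate-limit point in the list above, one common approach is to retry a rate-limited request after a delay. The sketch below retries on HTTP 429 and 503, honors a numeric `Retry-After` header when one is present, and otherwise backs off exponentially. The function names, retry count, and delay values are assumptions for illustration. Note that retrying a paid image-generation call can incur extra cost, so the retry budget is kept small.

```typescript
// Delay before retry number `attempt` (0-based). A numeric Retry-After value
// (in seconds) wins; otherwise use exponential backoff. Both are capped at maxMs.
function backoffDelayMs(attempt: number, retryAfter: string | null, baseMs = 500, maxMs = 8000): number {
  const seconds = retryAfter === null || retryAfter.trim() === '' ? NaN : Number(retryAfter);
  if (isFinite(seconds) && seconds >= 0) return Math.min(seconds * 1000, maxMs);
  return Math.min(baseMs * Math.pow(2, attempt), maxMs);
}

interface RetryableResponse {
  status: number;
  headers: { get(name: string): string | null };
}

// Call doFetch, retrying on 429/503 up to maxRetries times.
// Exceptions thrown by doFetch (e.g. network errors) propagate to the caller.
async function fetchWithRetry<T extends RetryableResponse>(
  doFetch: () => Promise<T>,
  maxRetries = 3,
  sleep: (ms: number) => Promise<void> = (ms) => new Promise<void>((resolve) => setTimeout(resolve, ms)),
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    const response = await doFetch();
    const retryable = response.status === 429 || response.status === 503;
    // Return the last response as-is once it succeeds or the retry budget is spent.
    if (!retryable || attempt >= maxRetries) return response;
    await sleep(backoffDelayMs(attempt, response.headers.get('Retry-After')));
  }
}
```

In the weather route this could wrap the upstream call, for example `fetchWithRetry(() => fetch(url))`, with the caller still checking `response.ok` afterwards and returning a friendly error message if the final attempt failed.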

Conclusion: You've Built a Truly Modern Application

By following this guide, you've done more than just build a weather app. You've mastered a foundational workflow for the next generation of software development. You've learned how to harness the reliability of live data and fuse it with the limitless creativity of generative AI. This combination is not a fleeting trend; it is the new standard for creating engaging, personalized, and intelligent applications.

Now that you have the blueprint, the real question is: what will you build? What live data can you connect to what creative output? The canvas is blank, and your new toolkit is ready.

What other kinds of applications do you think would be transformed by this combination of live APIs and generative AI? Share your most creative ideas in the comments below!
