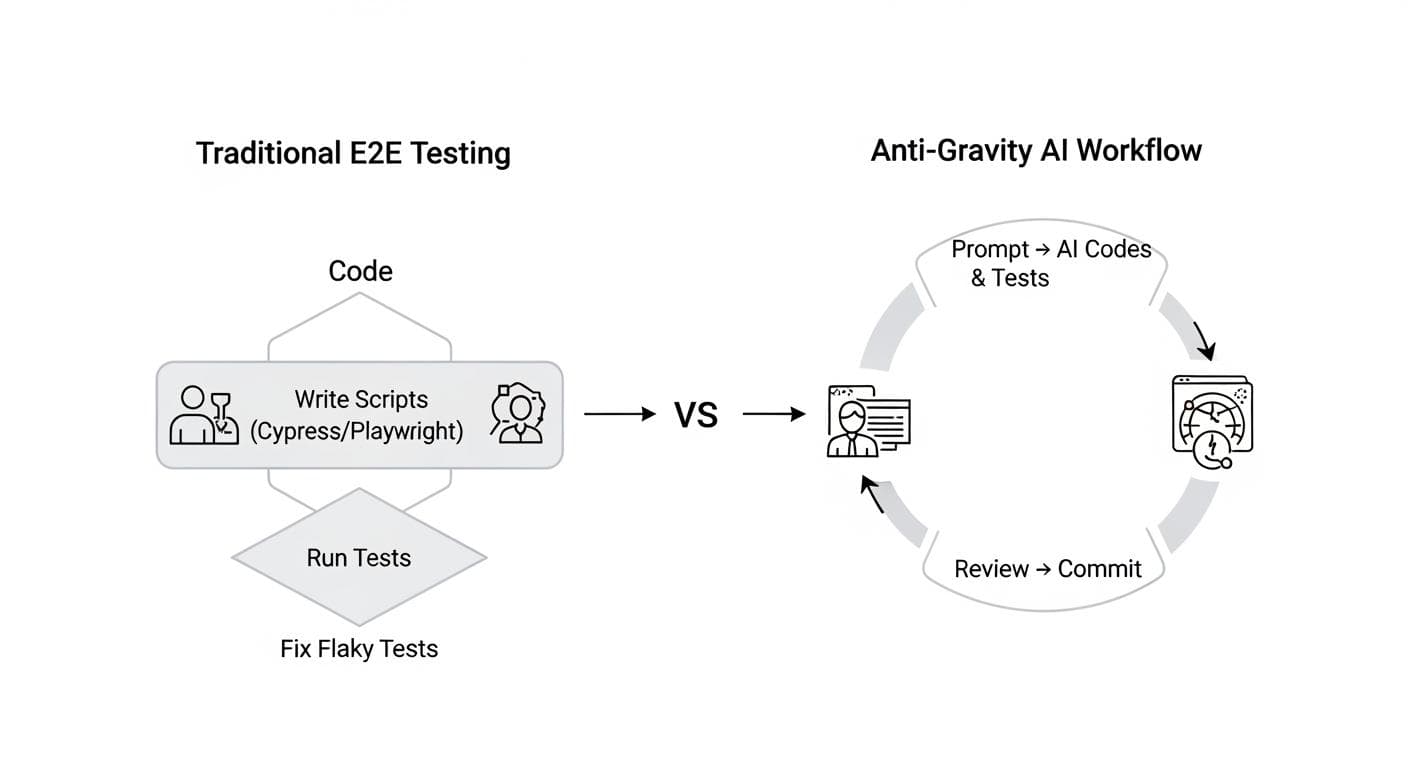

For any seasoned developer, the phrase "end-to-end testing" often triggers a cascade of unpleasant memories: late nights hunting down flaky tests, brittle selectors that break with the slightest UI change, and a maintenance overhead that feels like a second, shadow project running alongside the real one. For years, frameworks like Cypress and Playwright have been the best tools for the job, but they still require a developer to manually write, debug, and maintain a separate suite of test scripts. This creates a painful wall between building a feature and verifying it works.

But what if that wall could be completely demolished? What if testing wasn't a separate, subsequent step, but an intrinsic part of the development process itself? Google DeepMind's new project, Anti-Gravity, introduces a concept that feels like it's pulled from science fiction: an AI agent that not only writes the code for a feature but then opens a browser and tests its own work, just like a human would.

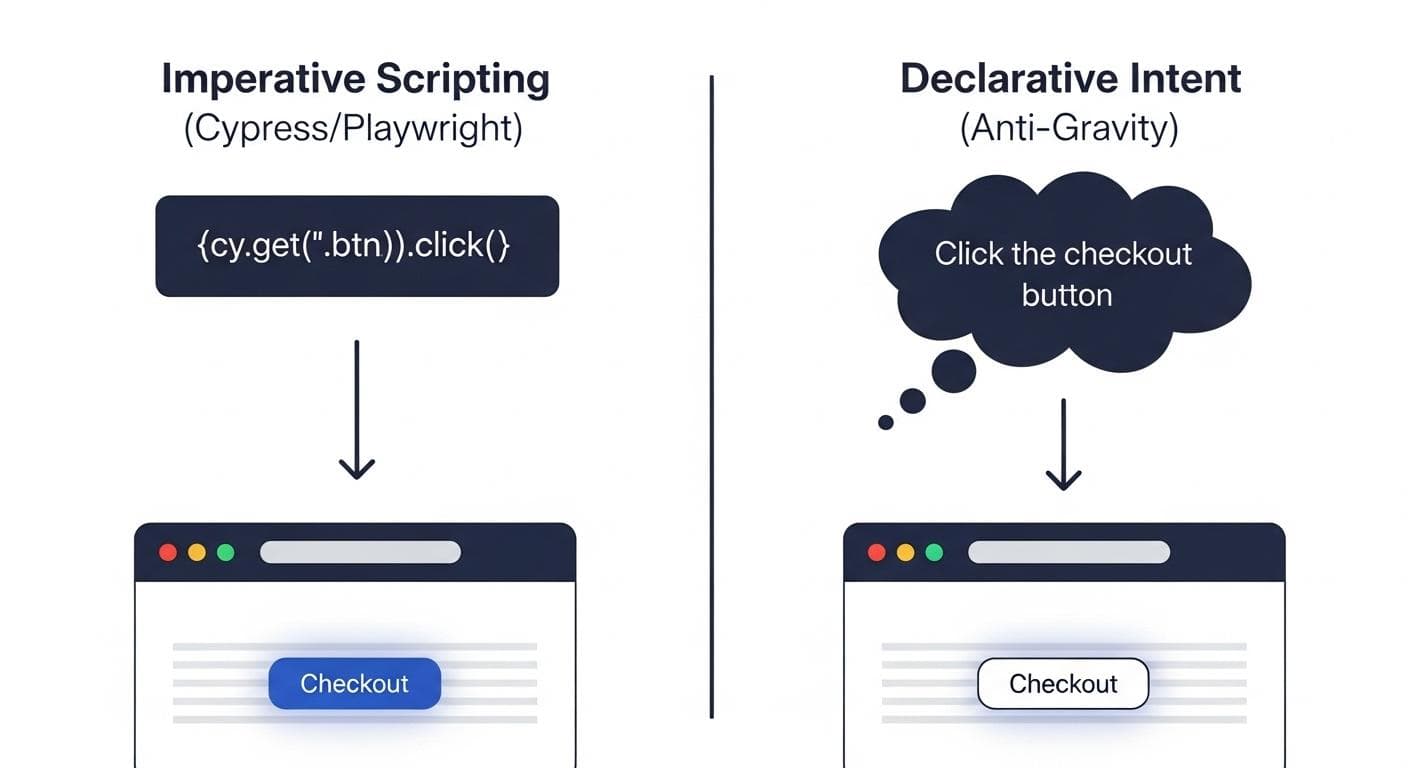

This isn't just about generating test scripts faster. It's a fundamental paradigm shift in how we think about software verification, moving from imperative scripts to declarative intent, and turning the browser itself into an active, intelligent participant in the development loop.

Why Traditional E2E Testing is a Developer's Nightmare

To understand the magnitude of Anti-Gravity's approach, we first need to appreciate the deep-seated problems with the status quo. Traditional automated E2E testing, for all its benefits, is a constant source of friction for development teams.

The Brittleness of Selectors and the Pain of Maintenance

The most common failure point for E2E tests is the selector: the id, class, or XPath expression used to find an element on a page. A simple class name change by a developer, a refactoring of the component tree, or a dynamic element added by a new feature can instantly break a dozen tests. This leads to a constant, reactive cycle of fixing tests that have failed not because the functionality is broken, but because the underlying implementation details have changed. This maintenance burden slows down development velocity and erodes trust in the test suite.
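To make this failure mode concrete, here is a minimal, self-contained TypeScript sketch. It does not use Cypress or Playwright: `UiElement`, `findByClass`, and the class names are hypothetical stand-ins for a real DOM and selector engine. The button behaves identically before and after the refactor, yet the class-based lookup stops finding it.

```typescript
// Hypothetical, simplified stand-in for a DOM element (not a real browser API).
interface UiElement {
  tag: string;
  classes: string[];
  text: string;
  onClick: () => string;
}

// Selector-style lookup: returns the first element carrying the given class.
function findByClass(elements: UiElement[], className: string): UiElement | undefined {
  const matches = elements.filter((el) => el.classes.indexOf(className) !== -1);
  return matches.length > 0 ? matches[0] : undefined;
}

// The same submit button before and after a purely cosmetic refactor.
const submitHandler = (): string => "form submitted";
const beforeRefactor: UiElement[] = [
  { tag: "button", classes: ["btn-submit"], text: "Submit", onClick: submitHandler },
];
const afterRefactor: UiElement[] = [
  { tag: "button", classes: ["button--primary"], text: "Submit", onClick: submitHandler },
];

// A "test" that is coupled to the class name rather than to behavior.
function runSelectorTest(elements: UiElement[]): string {
  const button = findByClass(elements, "btn-submit");
  if (button === undefined) {
    return "FAIL: element .btn-submit not found";
  }
  return button.onClick() === "form submitted" ? "PASS" : "FAIL: wrong behavior";
}

const resultBefore = runSelectorTest(beforeRefactor); // "PASS"
const resultAfter = runSelectorTest(afterRefactor);   // "FAIL: element .btn-submit not found"
```

The second result is a false alarm: the click handler is untouched, and only the class name the test depended on has changed.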

The Wall Between Development and QA

Historically, a division has existed between writing application code and writing test code. This can lead to a siloed mentality where testing is something that happens after development is "done." This disconnect means developers might not consider testability when building features, and testers might lack the full context of the code they're verifying. The result is a process that feels more like a handoff than a collaboration, adding delays and communication overhead to every release cycle.

A Paradigm Shift: Testing as an Integrated Workflow

Anti-Gravity proposes a radical solution by dissolving these boundaries. It's an integrated platform combining three core components:

- The Agent Manager: A high-level dashboard to create and manage multiple AI agents working on different tasks in parallel.

- The Editor: A full-featured, AI-powered code editor where a developer can step in at any time to collaborate with the agent, review code, or take over complex tasks.

- The AI Browser: A Chromium-based browser with an AI agent baked in, capable of understanding instructions, navigating web pages, and performing actions like clicking, typing, and scrolling.

This trifecta enables a workflow where development and testing are not separate events but a single, fluid conversation between the developer and the AI. Instead of writing code and then writing tests, the developer simply describes the desired outcome, and the AI handles both implementation and verification in one continuous motion.

How It Works: From a Simple Prompt to a Verified Feature

The process inside Anti-Gravity looks fundamentally different from a traditional development cycle. It all starts with a natural language prompt. The developer doesn't write a line of code; they describe the feature's requirements in plain English:

"Build me a flight lookup Next.js web app where the user can put in a flight number and the app gives you the start time, end time, time zones, start location, and end location of the flight. For now, use a mock API... Display the search results under the form input."

Once the prompt is given, the AI agent takes over in a transparent, verifiable process.
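For reference, the mock API that the prompt asks for could be as small as the following TypeScript sketch. This is an assumption about what such a mock might look like, not the code the agent actually generated: the `FlightInfo` field names, the `lookupFlight` and `normalizeFlightNumber` helpers, and the single `AA123` record are all invented for illustration.

```typescript
// Shape of one result, following the fields listed in the prompt.
// Field names are illustrative assumptions, not taken from the generated app.
interface FlightInfo {
  flightNumber: string;
  startTime: string;     // local departure time, ISO 8601 without offset
  endTime: string;       // local arrival time, ISO 8601 without offset
  startTimeZone: string; // IANA time zone name
  endTimeZone: string;   // IANA time zone name
  startLocation: string;
  endLocation: string;
}

// Placeholder data only; this is not a real schedule.
const MOCK_FLIGHTS: { [flightNumber: string]: FlightInfo } = {
  AA123: {
    flightNumber: "AA123",
    startTime: "2025-01-15T08:00:00",
    endTime: "2025-01-15T11:30:00",
    startTimeZone: "America/New_York",
    endTimeZone: "America/Los_Angeles",
    startLocation: "New York (JFK)",
    endLocation: "Los Angeles (LAX)",
  },
};

// Normalizes user input such as " aa 123 " to "AA123".
function normalizeFlightNumber(input: string): string {
  return input.replace(/\s+/g, "").toUpperCase();
}

// Mock lookup: returns the matching record, or null when the flight is unknown.
function lookupFlight(input: string): FlightInfo | null {
  const key = normalizeFlightNumber(input);
  if (!Object.prototype.hasOwnProperty.call(MOCK_FLIGHTS, key)) {
    return null;
  }
  const found = MOCK_FLIGHTS[key];
  return found === undefined ? null : found;
}
```

Returning `null` for unknown flights gives the UI an explicit "not found" state to render, which matters later when invalid input is tested.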

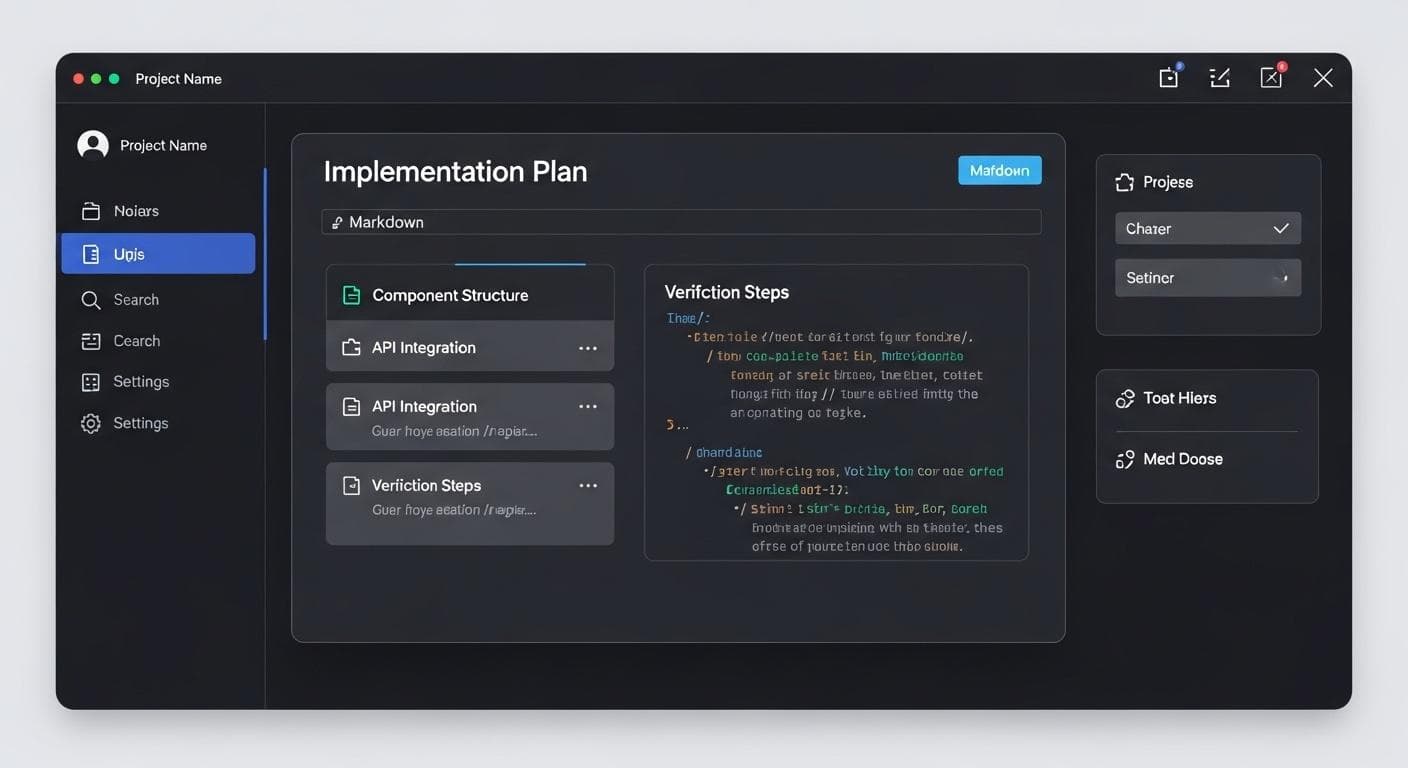

Step 1: The Plan - Creating Trust Through Transparency

The agent doesn't just blindly start writing code. It first creates an "artifact": a Markdown document called an Implementation Plan. This document outlines its understanding of the request, the components it plans to create, and, crucially, the steps it will take to verify its work. This gives the developer a clear checkpoint to review the AI's strategy and provide feedback before a single file is modified.
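One way to picture such a plan is as structured data rendered to Markdown. The sketch below is purely illustrative: the `ImplementationPlan` type, its field names, the `renderPlan` helper, and the example entries are assumptions, and the real artifact format is not described here.

```typescript
// Hypothetical structure mirroring the three things the plan is said to contain:
// the agent's understanding, the components it intends to build, and its verification steps.
interface ImplementationPlan {
  title: string;
  understanding: string;
  components: string[];
  verificationSteps: string[];
}

// Renders the plan as a Markdown document a developer could review.
function renderPlan(plan: ImplementationPlan): string {
  const lines: string[] = [];
  lines.push("# " + plan.title);
  lines.push("");
  lines.push("## Understanding");
  lines.push(plan.understanding);
  lines.push("");
  lines.push("## Proposed components");
  for (const component of plan.components) {
    lines.push("- " + component);
  }
  lines.push("");
  lines.push("## Verification");
  plan.verificationSteps.forEach((step, index) => {
    lines.push(String(index + 1) + ". " + step);
  });
  return lines.join("\n");
}

// Example entries are invented for the flight lookup prompt.
const examplePlan: ImplementationPlan = {
  title: "Implementation Plan: flight lookup app",
  understanding: "Look up a flight by number using a mock API and show the result under the form.",
  components: ["Search form", "Mock flight API", "Results panel"],
  verificationSteps: [
    "Start the local dev server",
    "Open the app in the browser",
    "Search for a known flight and check the displayed fields",
    "Search for an invalid flight number and check the response",
  ],
};

const planMarkdown = renderPlan(examplePlan);
```

Keeping the verification steps in the plan itself is what lets the developer object to the test strategy before any code exists.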

Step 2: Generation and Self-Verification

Once the plan is approved, the agent generates the necessary Next.js code. But this is where the magic happens. After running the local dev server, the agent's next instruction is to test the feature. It spawns the AI-powered browser, which automatically opens the new web app. A blue border appears, indicating it's under AI control. A glowing blue cursor moves autonomously, locating the input field, typing "American Airlines 123," clicking search, and inspecting the results. It even tests invalid states to see how the application responds.
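The type, click, and inspect sequence described above can be sketched in TypeScript against an in-memory stand-in for the page. Everything here is hypothetical: `FakePage`, `clickSearch`, and `verifyScenario` are illustrative names, the result strings are invented, and a real agent drives an actual browser rather than a plain object.

```typescript
// Hypothetical in-memory stand-in for the flight lookup page.
interface FakePage {
  inputValue: string;
  resultText: string;
}

// Stand-in for the app's search handler; the single known flight is placeholder data.
function clickSearch(page: FakePage): void {
  const query = page.inputValue.trim().toLowerCase();
  page.resultText = query === "american airlines 123"
    ? "Flight found: American Airlines 123"
    : "No flight found";
}

interface CheckResult {
  scenario: string;
  observed: string;
  passed: boolean;
}

// Runs one scenario the way the walkthrough describes: type, click, inspect.
function verifyScenario(scenario: string, typed: string, expected: string): CheckResult {
  const page: FakePage = { inputValue: "", resultText: "" }; // fresh page per scenario
  page.inputValue = typed;          // 1. type into the input field
  clickSearch(page);                // 2. click the search button
  const observed = page.resultText; // 3. inspect what the page shows
  return { scenario: scenario, observed: observed, passed: observed.indexOf(expected) !== -1 };
}

// One valid and one invalid state, as in the description above.
const verificationReport: CheckResult[] = [
  verifyScenario("valid flight", "American Airlines 123", "Flight found"),
  verifyScenario("invalid flight", "not a flight", "No flight found"),
];
const allPassed = verificationReport.filter((r) => !r.passed).length === 0;
```

Recording the observed text for each scenario, not just a pass or fail flag, is what makes a later human-readable report possible.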

Step 3: The Walkthrough - Reporting the Results

After completing its tests, the agent compiles its findings into a final artifact: the Walkthrough. This report summarizes the features it implemented and, most importantly, details how it verified them. It includes screenshots and recordings of its browser session, giving the developer both a fully coded feature and a verification report showing that it works as intended.

How Anti-Gravity Redefines the Game (vs. Cypress & Playwright)

The core difference between Anti-Gravity and traditional frameworks like Cypress or Playwright lies in a simple but profound shift: from imperative scripting to declarative intent. Traditional tools require you to tell them how to test. You write specific, step-by-step instructions tied to the UI's structure:

cy.get('button[data-testid="submit-form"]').click()

This command is brittle. If a developer renames the data-testid attribute to data-test-id, the test breaks, even though the button's function is unchanged. Anti-Gravity, by contrast, only needs to know what you want to test. The AI agent, with its understanding of the application's context, can interpret a command like "Test the full checkout flow" or "Submit the form."

It doesn't rely on fragile selectors. Instead, it perceives the page like a human, identifying a "submit button" based on its text, placement, and function. This makes tests resilient to minor UI and code changes, drastically reducing maintenance and allowing the test suite to focus purely on user-facing functionality.
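The contrast can be illustrated with a toy TypeScript sketch. This is not how Anti-Gravity works internally, which is not specified here; it is a deliberately crude heuristic, with hypothetical names (`Candidate`, `findByAttribute`, `resolveByIntent`) and arbitrary weights, that picks an element from its visible label and role instead of a test attribute.

```typescript
// Hypothetical description of an interactive element on the page.
interface Candidate {
  role: string;  // e.g. "button", "link"
  label: string; // visible text
  attributes: { [name: string]: string };
}

// Selector-style lookup: exact match on an attribute name and value.
function findByAttribute(candidates: Candidate[], name: string, value: string): Candidate | undefined {
  return candidates.filter((c) => c.attributes[name] === value)[0];
}

// Toy scoring: +2 per intent word found in the label, +1 if the intent names the role.
// The weights are arbitrary and chosen only for illustration.
function scoreForIntent(candidate: Candidate, intentWords: string[]): number {
  const label = candidate.label.toLowerCase();
  let score = 0;
  for (const word of intentWords) {
    if (label.indexOf(word) !== -1) {
      score += 2;
    }
  }
  if (intentWords.indexOf(candidate.role) !== -1) {
    score += 1;
  }
  return score;
}

// Returns the highest-scoring candidate, or undefined if nothing matches at all.
function resolveByIntent(candidates: Candidate[], intent: string): Candidate | undefined {
  const words = intent.toLowerCase().split(/\s+/).filter((w) => w.length > 0);
  let best: Candidate | undefined = undefined;
  let bestScore = 0;
  for (const candidate of candidates) {
    const score = scoreForIntent(candidate, words);
    if (score > bestScore) {
      best = candidate;
      bestScore = score;
    }
  }
  return best;
}

// The same page after data-testid was renamed to data-test-id.
const pageAfterRename: Candidate[] = [
  { role: "link", label: "Submit feedback", attributes: {} },
  { role: "button", label: "Cancel", attributes: {} },
  { role: "button", label: "Submit", attributes: { "data-test-id": "submit-form" } },
];

const bySelector = findByAttribute(pageAfterRename, "data-testid", "submit-form"); // undefined
const byIntent = resolveByIntent(pageAfterRename, "submit button"); // the "Submit" button
```

The attribute lookup returns nothing after the rename, while the intent-based lookup still lands on the button labeled "Submit". The trade-off is that a heuristic or model can also pick the wrong element, which is why the reviewable artifacts matter.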

The Road Ahead: Potential Limitations and Considerations

While this agent-assisted workflow is revolutionary, it's important to approach it with a balanced perspective. The technology is still nascent, and there are practical considerations and potential limitations to keep in mind:

- Handling Ambiguity: An AI might struggle with highly complex or ambiguous UIs where the 'correct' action isn't obvious. Human testers excel at this kind of exploratory testing, and the role of verifying the AI's interpretation remains critical.

- The 'Black Box' Problem: When a test fails, understanding *why* the AI made a certain decision can be more complex than debugging a line of code. Transparent logs and reviewable 'thought processes' will be essential for trust and adoption.

- Cost and Speed: Running E2E tests via LLM agents could be slower and more computationally expensive than executing lean, optimized test scripts, especially for large test suites in a CI/CD pipeline.

The Evolving Role of the QA Engineer

The rise of tools like Anti-Gravity doesn't mean the end of QA engineers. Instead, it signals an evolution of the role, shifting focus from manual scriptwriting to a more strategic, architectural function. In this new paradigm, a QA professional's value comes from their ability to think critically about the product and user experience.

Future QA roles might involve:

- Designing high-level test strategies: Defining the critical user journeys, edge cases, and success criteria that AI agents should verify.

- Prompt engineering for testing: Crafting the precise natural language prompts that guide the AI to perform complex, multi-step E2E tests (e.g., "Test the full checkout flow for a new user with an invalid credit card, ensuring the correct error message is displayed").

- Analyzing AI-generated test reports: Reviewing the walkthroughs and verification artifacts to identify gaps in test coverage or subtle bugs the AI might have missed.

This approach elevates the QA function from tedious implementation to high-impact strategic oversight, allowing humans to focus on creative problem-solving while delegating the repetitive execution to AI. By integrating testing directly into the act of creation, Anti-Gravity's AI-powered browser points to a future where we spend less time fixing brittle tests and more time building reliable, high-quality software.

The era of manually scripting cy.get('#user-email').type('test@example.com') may be drawing to a close. The future is simply telling your AI partner, "Test the login flow," and watching it happen.

What is the single biggest frustration you face with your current E2E testing process? Share your story in the comments below!
