The world of software development is on the cusp of a monumental shift. For years, AI coding assistants like GitHub Copilot have acted as super-powered autocompletes, helping developers write code faster. But what if an AI could do more than just suggest the next line? What if it could understand a complex task, formulate a plan, write the code, test the result, and report back, all while you focus on the bigger picture? This is the promise of agent-assisted development, and Google DeepMind's new platform, Anti-Gravity, is a comprehensive attempt to make it a reality.

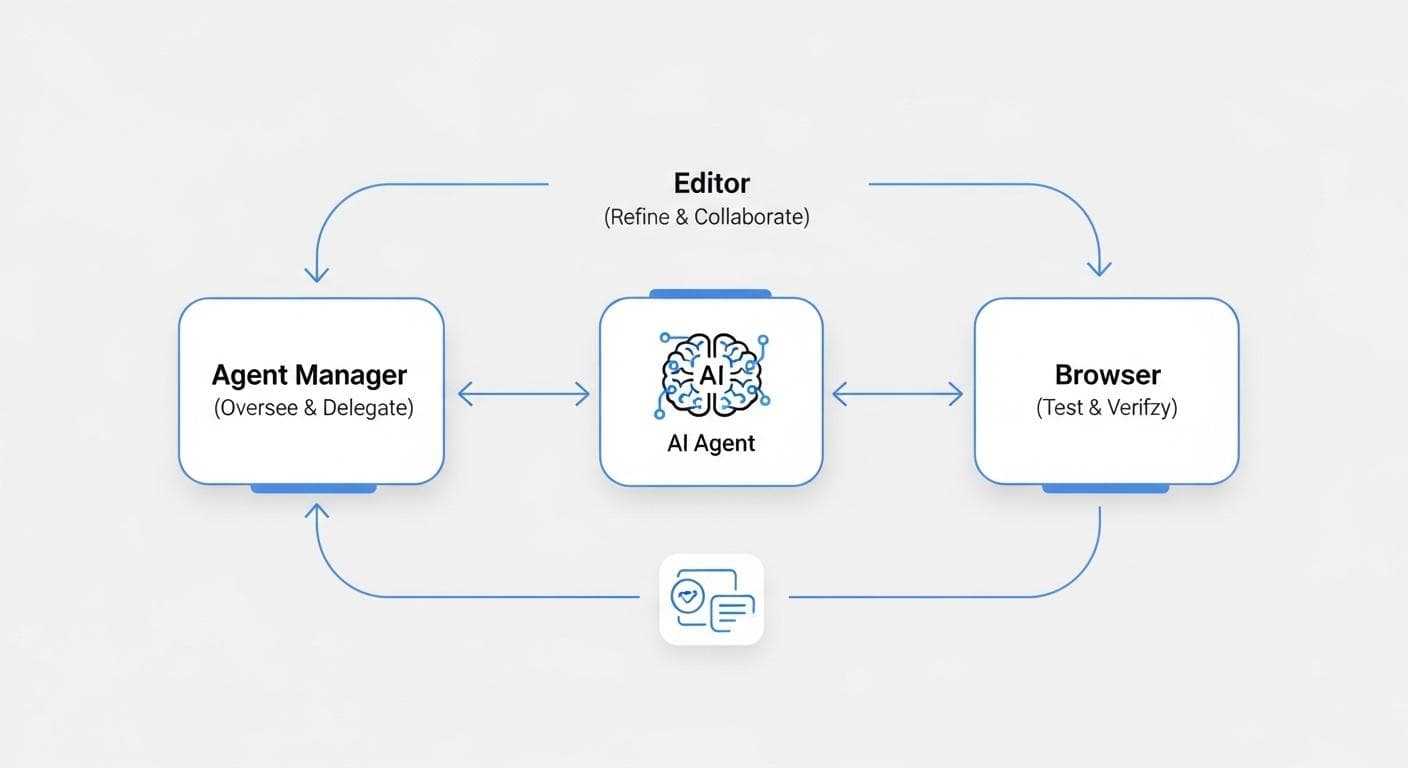

Anti-Gravity isn't just an editor or a chatbot; it's a fully integrated development environment built around a powerful AI agent. It’s designed from the ground up to handle entire tasks, from initial concept to final verification. To achieve this, it combines three distinct but deeply interconnected components: the Agent Manager, the Editor, and the Browser. Understanding how these three pieces work in concert is the key to grasping the new development paradigm Anti-Gravity proposes.

What is Google's Anti-Gravity? The New Paradigm of Agent-Assisted Development

Before diving into the components, it's crucial to understand the core philosophy. Traditional AI tools are reactive; you write code, and they suggest completions. Anti-Gravity is proactive. You give it a high-level goal, such as "Build a flight tracker web app," and the AI agent takes ownership. It thinks, plans, executes, and even asks for clarification when needed. For a more complete overview of the platform, refer to our complete guide to Google DeepMind's Anti-Gravity.

This workflow is built on a model of trust and transparency. The AI isn't a black box. At every stage, it produces human-readable "Artifacts" that explain its strategy and prove its work. This allows the developer to act as a project lead or a reviewer, delegating tasks to the AI agent and stepping in only when their expertise is truly needed. The goal isn't to replace the developer, but to elevate them, allowing them to operate at a higher level of abstraction and manage multiple complex tasks in parallel.

Component 1: The Agent Manager - Your AI Project Command Center

The Agent Manager is the heart of Anti-Gravity. It's the mission control where you define tasks, manage workspaces, and oversee all your AI agents. Unlike a simple command line or a chat window, the Manager is a persistent space that keeps track of every project and every agent's progress.

More Than a Task Runner

Think of the Agent Manager as the management layer for your AI workforce. It's not just a place to issue a one-off command. It's where you can spin up multiple agents for different projects, or even assign multiple agents to work on different aspects of the same project simultaneously. For example, you could have one agent researching an API while another designs a logo, all within the same workspace. This ability to run tasks in parallel is a core strength of the platform. It enables a "multi-threaded" workflow that a single developer could not achieve alone, and it is crucial for accelerating development with parallel AI agents in Anti-Gravity.

Key Functions of the Central Hub

The main interface, sometimes called the "inbox," provides a consolidated view of all ongoing work. Each conversation thread represents a task assigned to an agent. You can check in on progress, review generated artifacts, and provide feedback without ever leaving this central hub. This is also where you set the ground rules for agent autonomy. You can configure an agent to run commands automatically for simple tasks or to prompt for approval on more sensitive operations, building a trusted partnership over time.
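To make the autonomy setting concrete, here is a minimal sketch of how an "auto-run versus ask for approval" rule could be modeled. Every name in it (`AutonomyPolicy`, `resolveMode`, the pattern list) is hypothetical and chosen for illustration; it is not Anti-Gravity's actual configuration format, and the substring matching is deliberately naive.

```typescript
// Hypothetical model of an agent autonomy setting. None of these names come
// from Anti-Gravity itself; they only illustrate the idea described above.
type AutonomyMode = "auto-run" | "ask-first";

interface AutonomyPolicy {
  // Mode applied when no sensitive pattern matches.
  defaultMode: AutonomyMode;
  // Substrings that mark a command as sensitive (illustrative only).
  sensitivePatterns: string[];
}

// Decides how a proposed terminal command should be handled under a policy.
function resolveMode(command: string, policy: AutonomyPolicy): AutonomyMode {
  const isSensitive = policy.sensitivePatterns.some(
    (pattern) => command.indexOf(pattern) !== -1
  );
  return isSensitive ? "ask-first" : policy.defaultMode;
}

const examplePolicy: AutonomyPolicy = {
  defaultMode: "auto-run",
  sensitivePatterns: ["rm ", "git push", "npm publish"],
};

// A routine command runs automatically; a sensitive one waits for approval.
const lintDecision = resolveMode("npm run lint", examplePolicy);
const pushDecision = resolveMode("git push origin main", examplePolicy);
```

A real policy would likely need something stricter than substring checks, such as an explicit allowlist of commands, but the two-outcome shape is the part that matters here.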

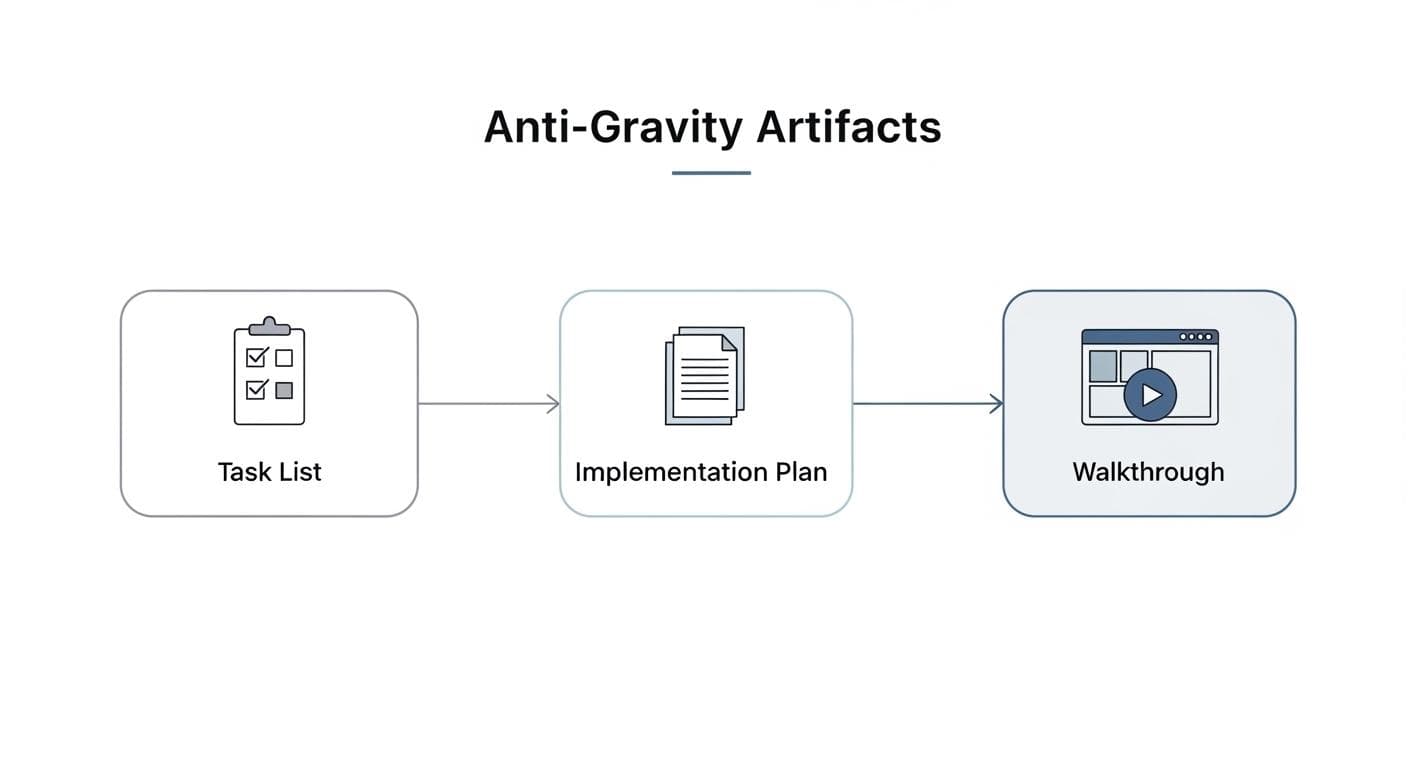

The Secret Sauce: Understanding Anti-Gravity "Artifacts"

Perhaps the most innovative concept within Anti-Gravity is its use of "Artifacts." These are structured markdown files generated by the agent to document its process. They are the primary mechanism for building trust and ensuring the developer remains in control. There are three main types: the Implementation Plan, the Walkthrough, and the Task List. For a deeper dive into these documentation tools, explore our article on mastering Anti-Gravity Artifacts: Task Lists, Implementation Plans, and Walkthroughs.

The Implementation Plan: The Agent's Strategy Blueprint

Before making any significant changes to your codebase, the agent first does its research and produces an Implementation Plan. This document outlines its understanding of the problem, the approach it plans to take, the files it will create or modify, and how it will verify success. It's the equivalent of a design document, giving you a chance to review the agent's logic and make corrections before a single line of code is written. You can even leave comments directly on the plan, just like in a Google Doc, to guide the agent, a key aspect of human-AI collaboration in the Anti-Gravity Editor.
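As a rough illustration of what such a plan contains, the sketch below models the four elements named above (problem, approach, files, verification) and renders them as markdown. The `ImplementationPlan` interface, its field names, the file paths, and the section headings are all assumptions made for this example; the real artifact's layout may differ.

```typescript
// Hypothetical shape for an Implementation Plan. Field names are illustrative
// and simply mirror the four things the plan is described as covering.
interface FileChange {
  path: string;
  action: "create" | "modify";
}

interface ImplementationPlan {
  problem: string;
  approach: string[];
  files: FileChange[];
  verification: string[];
}

// Renders the plan as markdown, since artifacts are described as markdown files.
function renderPlan(plan: ImplementationPlan): string {
  const lines: string[] = [
    "# Implementation Plan",
    "",
    "## Problem",
    plan.problem,
    "",
    "## Approach",
    ...plan.approach.map((step, i) => `${i + 1}. ${step}`),
    "",
    "## Files",
    ...plan.files.map((f) => `- ${f.action}: ${f.path}`),
    "",
    "## Verification",
    ...plan.verification.map((check) => `- [ ] ${check}`),
  ];
  return lines.join("\n");
}

// Example plan for the flight tracker prompt (paths are made up).
const flightTrackerPlan: ImplementationPlan = {
  problem: "Build a Next.js flight tracker app with a mock API.",
  approach: [
    "Scaffold the Next.js project",
    "Add a mock flight API",
    "Build the search form and results list",
  ],
  files: [
    { path: "app/page.tsx", action: "create" },
    { path: "lib/mockFlights.ts", action: "create" },
  ],
  verification: ["Search for a flight number in the browser and confirm results render"],
};

const planMarkdown = renderPlan(flightTrackerPlan);
```

Reading the rendered plan top to bottom is what lets a reviewer catch a wrong assumption, for example an unexpected file in the "Files" section, before any code exists.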

The Walkthrough: The Agent's Proof of Work

After a task is complete, the agent generates a Walkthrough. This is the final report that proves the job was done correctly. It summarizes the features implemented and, crucially, includes the evidence of verification. This might include screenshots from the automated browser test, terminal command outputs, or even a generated pull request description. The Walkthrough closes the loop, providing concrete evidence that the task not only got done, but works as expected.

A third artifact, the Task List, is a simple to-do list the agent maintains for itself, giving you a real-time, granular view of its progress.

Component 2: The Editor - Where Human and AI Collaboration Shines

While the Agent Manager is for high-level direction, the Anti-Gravity Editor is for getting your hands dirty. At any point, you can take over from the agent and dive into the code. This isn't just a standard IDE with AI features bolted on; it's a coding environment built specifically for the human-AI hand-off. To learn more about optimizing your interaction within this environment, read our guide on human-AI collaboration using the Anti-Gravity Editor and commenting on agent plans.

A Deeply Context-Aware IDE

The Editor has all the features you'd expect, like syntax highlighting and powerful autocomplete. But its real power comes from its deep context awareness. Because it's integrated with the Agent Manager, it knows the full history of the task: the initial prompt, the research, the implementation plan, and the agent's conversational history. This allows it to provide highly relevant suggestions. When you start typing, it doesn't just guess the next token; it understands the entire goal and suggests entire blocks of code to complete the agent's work.

The Human-AI Hand-off: Taking a Task from 90% to 100%

This is where Anti-Gravity truly shines. An AI agent can often get a task 90% of the way there, handling the boilerplate and standard implementation. But often, that last 10% requires human intuition, specific domain knowledge, or nuanced refinement. The Editor is designed for this exact moment. You can let the agent do the heavy lifting of, for example, fetching data from a new API. Then, you can jump into the editor to seamlessly integrate that new data into your existing complex UI, using the AI's context-aware suggestions to accelerate the final integration. This process is similar to integrating live APIs and AI-generated assets into your project.

Component 3: The Browser - Closing the Loop with Automated Testing

The final piece of the puzzle is the Browser. This is an agent-controlled instance of Chrome that allows Anti-Gravity to move beyond just writing code into the realm of verifying it, a process detailed further in our article on automated E2E testing with Anti-Gravity's AI-powered browser. This closes the loop on the development lifecycle, providing a powerful way to ensure the code the agent writes actually works in a real-world environment.

An Agent-Controlled Chrome Instance

When a task involves a user interface, you can instruct the agent to test its work. Anti-Gravity will launch its integrated browser, often identifiable by a visual cue like a blue border to indicate agent control. The AI can then interact with the web app just like a human would: it can move the cursor, click buttons, fill out forms, and scroll through pages. This isn't a brittle, script-based test; it's a dynamic interaction with the live application.

From Code Generation to True Verification

This capability matters because it turns generated code into verified work. In our flight tracker example, after the agent builds the initial UI, it can launch the browser, type a flight number into the input field, click the search button, and verify that the results appear correctly. The screenshots and screen recordings from this session are then automatically included in the Walkthrough artifact. This gives the reviewer concrete, end-to-end evidence that the feature works, increasing confidence and reducing the need for manual QA on routine tasks.
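The type, click, verify sequence can be sketched in a few lines. This is not how Anti-Gravity drives Chrome internally; `PageDriver`, the selectors, and the in-memory fake page are all hypothetical stand-ins so the example runs without a browser.

```typescript
// Hypothetical, minimal stand-in for a controllable page. A real run would
// drive an actual browser; this interface only captures the three actions used.
interface PageDriver {
  type(selector: string, text: string): void;
  click(selector: string): void;
  read(selector: string): string;
}

interface CheckResult {
  passed: boolean;
  // Human-readable log, the kind of evidence a Walkthrough could cite.
  steps: string[];
}

// The sequence from the flight tracker example: type, click, verify.
function verifyFlightSearch(page: PageDriver, flightNumber: string): CheckResult {
  const steps: string[] = [];
  page.type("#flight-number", flightNumber);
  steps.push(`typed "${flightNumber}" into #flight-number`);
  page.click("#search");
  steps.push("clicked #search");
  const passed = page.read("#results").indexOf(flightNumber) !== -1;
  steps.push(passed ? "results show the flight" : "results are missing the flight");
  return { passed, steps };
}

// In-memory fake app: clicking search copies the typed value into the results.
function createFakePage(): PageDriver {
  const fields: { [selector: string]: string } = {};
  return {
    type(selector, text) {
      fields[selector] = text;
    },
    click(selector) {
      if (selector === "#search") {
        fields["#results"] = `Flight ${fields["#flight-number"] || ""}: On time`;
      }
    },
    read(selector) {
      return fields[selector] || "";
    },
  };
}

const searchCheck = verifyFlightSearch(createFakePage(), "UA123");
```

The returned `steps` log plays the role the screenshots and recordings play in the real tool: a record of what was done and what was observed.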

The Unified Workflow: A Practical Example from Start to Finish

To see how these components create a single, powerful system, let's walk through the flight tracker app example. For a step-by-step tutorial on building a similar application, check out our guide on building your first app with Anti-Gravity.

- Step 1 (Agent Manager): The developer gives a high-level prompt: "Build a Next.js flight tracker app with a mock API." The agent accepts the task, generates an Implementation Plan, and, upon approval, scaffolds the entire project.

- Step 2 (Agent Manager & Browser): The agent finishes the initial code and uses the integrated Browser to test the form input, recording the results for its Walkthrough artifact.

- Step 3 (Agent Manager & Editor): The developer assigns a new task: "Replace the mock API with a live flight data API." The agent researches the API and creates a utility file. The developer then opens the Editor to perform the final integration, using the AI's context-aware suggestions to quickly swap the mock data for the new live data types. For more on this, see our guide on integrating live APIs and AI-generated assets.

- Step 4 (Agent Manager & Browser): The developer's final task: "Make the flight results clickable to create a Google Calendar event." The agent implements the logic and once again uses the Browser to click a result card, verifying that it correctly opens a pre-filled calendar link. The final Walkthrough is delivered.
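For a sense of the code these steps might produce, here is a small sketch of a mock flight lookup (Step 1) and a pre-filled calendar link builder (Step 4). The `FlightResult` shape, the sample data, and the function names are hypothetical. The link uses the widely seen `calendar.google.com/calendar/render?action=TEMPLATE` URL pattern, which is treated here as an assumption rather than a documented contract.

```typescript
// Hypothetical flight shape and mock data standing in for the "mock API".
interface FlightResult {
  flightNumber: string;
  origin: string;
  destination: string;
  departureUtc: string; // ISO 8601 timestamp in UTC
  arrivalUtc: string;
}

const sampleFlight: FlightResult = {
  flightNumber: "UA123",
  origin: "SFO",
  destination: "JFK",
  departureUtc: "2025-03-01T14:30:00Z",
  arrivalUtc: "2025-03-01T20:00:00Z",
};

const MOCK_FLIGHTS: FlightResult[] = [sampleFlight];

// Mock lookup: case-insensitive exact match on the flight number.
function searchFlights(query: string): FlightResult[] {
  const wanted = query.trim().toUpperCase();
  return MOCK_FLIGHTS.filter((flight) => flight.flightNumber === wanted);
}

// Converts "2025-03-01T14:30:00Z" into the compact form "20250301T143000Z".
function toCalendarStamp(isoUtc: string): string {
  return new Date(isoUtc)
    .toISOString()
    .replace(/[-:]/g, "")
    .replace(/\.\d{3}/, "");
}

// Builds a pre-filled event link (URL pattern is an assumption, see above).
function buildCalendarLink(flight: FlightResult): string {
  const params: [string, string][] = [
    ["action", "TEMPLATE"],
    ["text", `Flight ${flight.flightNumber}: ${flight.origin} to ${flight.destination}`],
    ["dates", `${toCalendarStamp(flight.departureUtc)}/${toCalendarStamp(flight.arrivalUtc)}`],
  ];
  const query = params
    .map(([key, value]) => `${key}=${encodeURIComponent(value)}`)
    .join("&");
  return `https://calendar.google.com/calendar/render?${query}`;
}

const exampleLink = buildCalendarLink(sampleFlight);
```

In Step 3, replacing `MOCK_FLIGHTS` with a live data source would mean mapping the provider's response into the same `FlightResult` shape, which is why keeping that type stable makes the swap a contained change.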

How Does Anti-Gravity Compare to Tools Like GitHub Copilot?

While tools like GitHub Copilot are excellent line-by-line coding partners, Anti-Gravity operates at a much higher level of abstraction. The key difference is the concept of agency.

- Copilot is a co-pilot: It sits next to you and offers suggestions while you fly the plane. It helps you type faster and smarter.

- Anti-Gravity is a pilot you delegate to: You tell it the destination, and it handles the takeoff, navigation, and landing, providing you with reports along the way. You remain the captain, ready to take the controls at any moment.

This makes Anti-Gravity less of a Copilot competitor and more of a new category of tool: an integrated AI developer environment focused on task automation rather than just code completion.

Embracing the Shift: Limitations and the Learning Curve

Adopting a tool like Anti-Gravity requires a mental shift. Developers accustomed to having their hands on the keyboard for every single change must learn to trust the agent and become comfortable with a review-and-approve workflow. This transition from 'doer' to 'director' is the primary learning curve. Early on, it might feel faster to just code a simple task yourself, but the real power emerges when you begin to manage multiple, complex agent tasks in parallel.

Furthermore, like any AI system, its effectiveness is highly dependent on the quality of the prompt. Learning to write clear, unambiguous, and well-scoped tasks is a new skill that developers will need to cultivate to get the most out of the platform.

Who is Anti-Gravity For? Ideal Use Cases and Supported Tech

While the technology is broadly applicable, certain scenarios are particularly well-suited for this new agent-assisted paradigm.

Ideal Project Types

Anti-Gravity excels at tasks with clear inputs and outputs. This includes:

- Rapid Prototyping: Scaffolding new applications or features from a high-level description.

- API Integration: Researching a new API, writing the client code, and integrating it into an existing application.

- Boilerplate and Repetitive Tasks: Setting up projects, writing unit tests, or performing straightforward code migrations.

- Data-Driven UI Components: Building components that fetch data from an endpoint and display it in a specified format.

Supported Languages and Frameworks

While constantly expanding, the system shows strong initial support for web development ecosystems, particularly those centered around JavaScript and TypeScript. Frameworks like Next.js, React, and Node.js are well-supported, as demonstrated by the platform's ability to build, run, and test a full-stack web application from scratch. Support for other languages like Python and Go, as well as mobile and backend frameworks, is a likely direction for future development.

Conclusion: The Future is a "Multi-Threaded" Workflow

Google DeepMind's Anti-Gravity is more than the sum of its parts. The true innovation isn't just the AI agent, the smart editor, or the automated browser; it's the seamless integration of all three into a cohesive platform. This synergy creates a novel workflow where a developer can offload entire streams of work to AI agents, allowing them to focus their own cognitive energy on the most challenging and creative aspects of software engineering.

This represents a fundamental shift from a single-threaded workflow, where a developer does one thing at a time, to a multi-threaded one, where they orchestrate multiple lines of work simultaneously. While still in its early days, Anti-Gravity provides a compelling glimpse into a future where developers are less like typists and more like conductors of a powerful AI orchestra.

What kind of complex, time-consuming task would you delegate to an AI agent in a tool like Anti-Gravity? Share your most ambitious ideas in the comments below!
