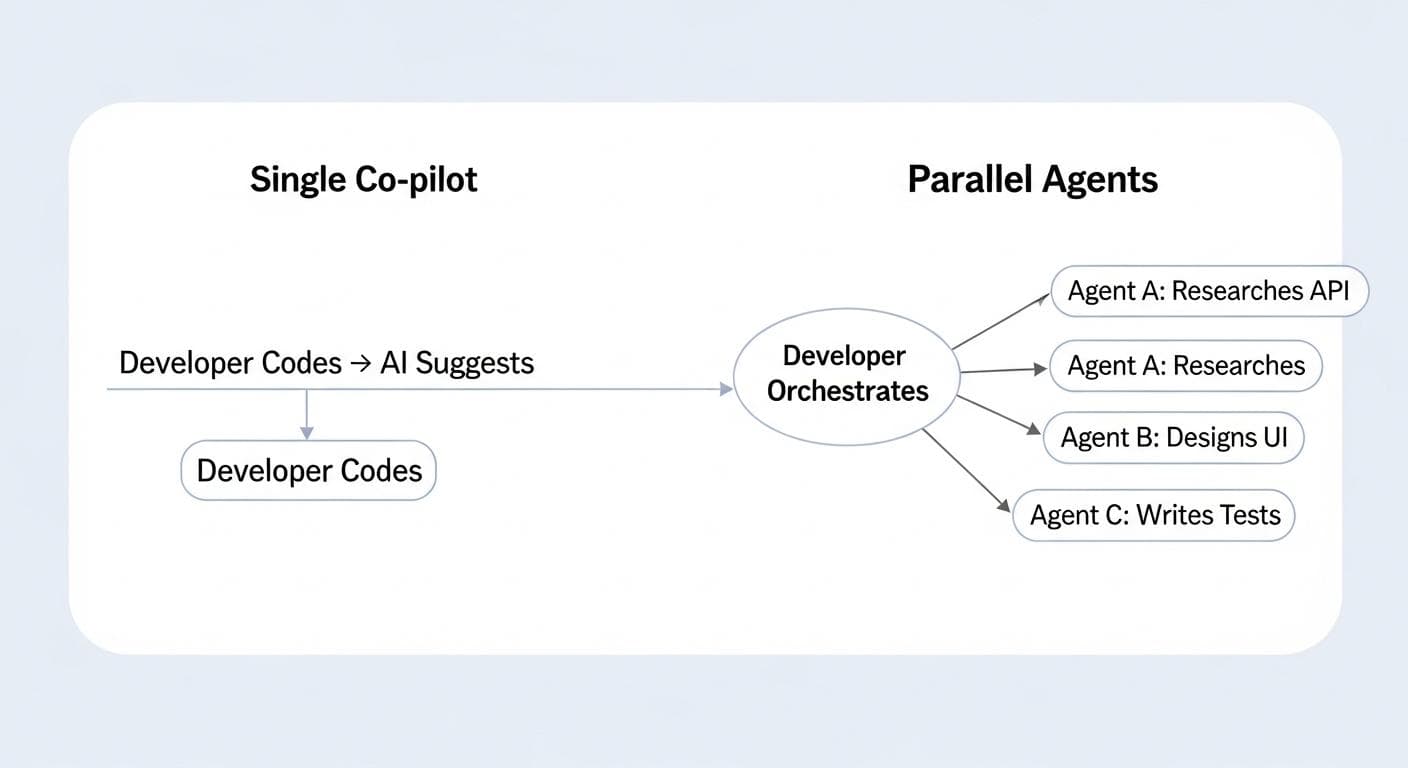

For the past few years, the life of a software developer has been quietly revolutionized by AI assistants. Tools like GitHub Copilot have become an indispensable second pair of hands, suggesting lines of code, completing functions, and turning natural language comments into boilerplate. This is the Co-pilot Paradigm: a powerful but fundamentally linear process where the developer writes, the AI suggests, and the developer accepts or rejects.

But what if this is just the beginning? What if, instead of a single co-pilot, you had an entire crew? A team of autonomous AI agents you could dispatch to work on multiple complex tasks in parallel, while you transitioned from a hands-on coder to a high-level orchestrator. This isn't science fiction; it's the next seismic shift in software engineering, and Google DeepMind's new environment, codenamed "Anti-Gravity," provides the first comprehensive look at this powerful future.

From Co-pilot to Crew: The Critical Shift in AI-Assisted Coding

The move from a single assistant to a team of parallel agents represents a fundamental change in how we approach the software development lifecycle (SDLC). It’s a transition from enhancing a single workflow to running multiple workflows concurrently.

The Bottleneck of a Single AI Assistant

Despite their power, single AI assistants operate within the developer's own linear workflow. You can't ask Copilot to research an API's documentation, design a logo, and scaffold a new feature at the same time. A developer still has to context-switch, waiting for one task to finish before starting the next. This serial process, even when accelerated, remains a significant bottleneck.

Defining the Parallel Workflow: One Developer, Multiple Autonomous Agents

The parallel workflow removes this limitation. A developer defines the high-level goals, then delegates the component tasks to different specialized AI agents. One agent can dive into complex documentation, another can generate and iterate on design assets, while a third writes the foundational code. Because they work simultaneously, the total wait approaches the duration of the slowest task rather than the sum of all of them, which can compress timelines from days to hours, or hours to minutes.
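
To make the idea concrete, here is a minimal sketch of fan-out delegation in TypeScript. It is not Anti-Gravity's API: `AgentTask`, `makeTask`, `dispatchCrew`, and `crewLog` are hypothetical names invented for illustration, and each "agent" is simulated by a function that yields once in place of real work.

```typescript
// Hypothetical shape of a delegated task; not an Anti-Gravity API.
type AgentTask = {
  agent: string;              // label for the specialized agent
  run: () => Promise<string>; // the work, resolving to a short result summary
};

// Shared log so the interleaving of the simulated agents is observable.
const crewLog: string[] = [];

// Build a simulated task: log a start, yield once (standing in for slow
// I/O such as network calls), log a finish, then return the summary.
function makeTask(agent: string, summary: string): AgentTask {
  return {
    agent,
    run: async () => {
      crewLog.push(`${agent}: started`);
      await Promise.resolve();
      crewLog.push(`${agent}: finished`);
      return summary;
    },
  };
}

// Start every task before waiting on any of them, then collect results.
// A failing task is recorded instead of discarding the others' results.
async function dispatchCrew(tasks: AgentTask[]): Promise<Map<string, string>> {
  const entries = await Promise.all(
    tasks.map(async (task): Promise<[string, string]> => {
      try {
        return [task.agent, await task.run()];
      } catch (err) {
        return [task.agent, `failed: ${String(err)}`];
      }
    })
  );
  return new Map(entries);
}
```

Calling `dispatchCrew` with a research, a design, and a scaffolding task starts all three before any of them finishes, which is the property that separates this from a serial loop that awaits each task in turn.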

Case Study: A Deep Dive into Google's "Anti-Gravity" Environment

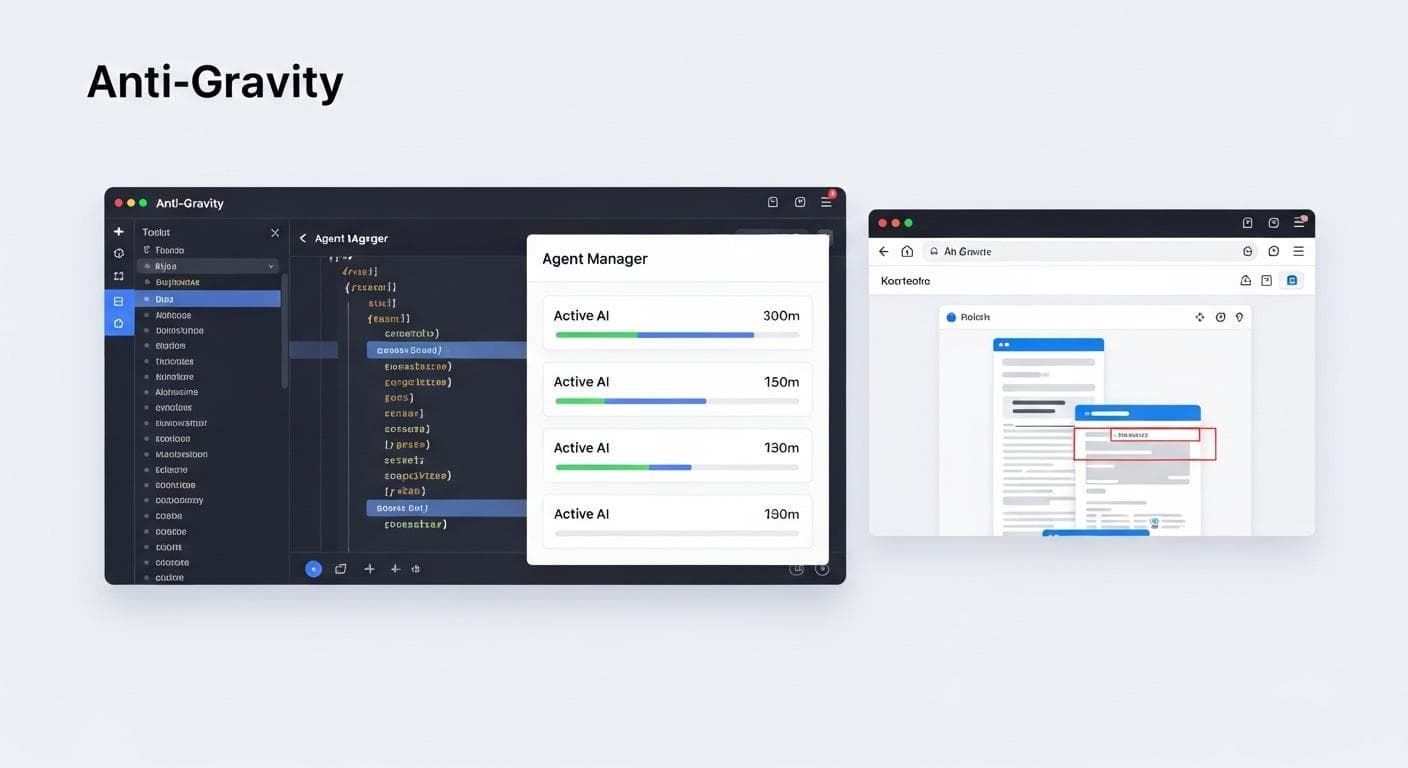

Anti-Gravity is more than just an editor; it's a complete development environment built from the ground up to support this new multi-agent paradigm. It's built on three core pillars designed to facilitate orchestration, execution, and verification.

The Three Pillars: The Editor, Agent Manager, and Integrated Browser

- The Agent Manager: This is the command center. From a single interface, a developer can create, deploy, and monitor multiple agents across different projects. It's the mission control from which you dispatch your AI crew.

- The AI-Powered Editor: When it's time to get hands-on, the Anti-Gravity editor provides a familiar environment supercharged with agentic capabilities. It's not just about code completion; the agent has full context of the project and the ongoing tasks, allowing it to provide highly relevant assistance for bridging the gap between 90% and 100% completion.

- The Integrated Browser: This closes the feedback loop. Agents can be tasked with not only writing code but also testing it in a real browser environment. The agent can navigate, click, type, and verify that the features it built work as intended, providing a level of autonomous quality assurance that code-completion assistants do not offer.

The Trust Layer: How "Artifacts" Make Autonomous Work Observable

Handing over control to autonomous agents requires a profound level of trust. Anti-Gravity addresses this head-on with a system of "Artifacts": structured, human-readable documents that agents generate throughout their lifecycle.

- Implementation Plan: Before writing a single line of code, the agent presents a detailed plan. It outlines its understanding of the task, the approach it will take, the files it will create or modify, and its verification strategy. The developer reviews and approves this plan, ensuring alignment before work begins.

- Task List: A real-time, self-updating to-do list that the agent maintains. This provides transparent progress tracking, allowing the developer to see exactly what the agent is working on at any given moment.

- Walkthrough: Upon completion, the agent delivers a summary report. This artifact details what was accomplished and, crucially, how it was verified. It might include screenshots, screen recordings of browser tests, or logs from terminal commands, offering concrete proof that the job is done correctly.
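
One way to picture the three artifacts is as a small set of typed records. The sketch below is an assumption made for illustration: this article does not describe Anti-Gravity's actual artifact format, so every type name and field here (`ImplementationPlanArtifact`, `approvedByDeveloper`, and so on) is hypothetical, derived only from the descriptions above.

```typescript
// Hypothetical shapes; the real artifact format is not described here.
interface ImplementationPlanArtifact {
  kind: "implementation-plan";
  taskUnderstanding: string;
  approach: string;
  filesToTouch: string[];
  verificationStrategy: string;
  approvedByDeveloper: boolean; // work should not begin until this is true
}

interface TaskListArtifact {
  kind: "task-list";
  items: { title: string; state: "todo" | "in-progress" | "done" }[];
}

interface WalkthroughArtifact {
  kind: "walkthrough";
  accomplished: string[];
  evidence: { type: "screenshot" | "recording" | "terminal-log"; ref: string }[];
}

type AgentArtifact =
  | ImplementationPlanArtifact
  | TaskListArtifact
  | WalkthroughArtifact;

// Produce a one-line status that a reviewer could scan at a glance.
function summarizeArtifact(a: AgentArtifact): string {
  switch (a.kind) {
    case "implementation-plan":
      return a.approvedByDeveloper ? "plan approved" : "plan awaiting review";
    case "task-list": {
      const done = a.items.filter((item) => item.state === "done").length;
      return `${done}/${a.items.length} tasks done`;
    }
    case "walkthrough":
      return `${a.accomplished.length} changes, ${a.evidence.length} pieces of evidence`;
  }
}
```

Modeling the artifacts as a discriminated union on `kind` means the compiler flags any artifact type that a review tool forgets to handle.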

Walkthrough: Building a Flight Tracker App in Minutes with Parallel Agents

To see this paradigm in action, let's walk through the process of building a full-stack application from scratch using Anti-Gravity.

Task 1 (Foreground): Scaffolding the Core App with a Single Prompt

The process begins not with npx create-next-app@latest, but with a high-level prompt:

Build me a flight lookup Next.js web app where the user can put in a flight number and the app gives you the start time, end time, time zones, start location, and end location of the flight. For now, use a mock API.

An agent immediately gets to work, running the necessary terminal commands, scaffolding the Next.js project, and building the basic UI components. It generates an Implementation Plan, which is quickly approved, and within moments, a functional skeleton of the app is live on the dev server.
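
The article does not show the code the agent generated, but the mock API the prompt asks for could look roughly like the sketch below. Treat all of it as an assumption: the `MockFlight` shape, the `lookupMockFlight` helper, and the sample flight `XX123` are made up for illustration and are not real flight data.

```typescript
// Hypothetical result shape covering the fields the prompt asks for.
type MockFlight = {
  flightNumber: string;
  departureTime: string;     // local wall-clock time, ISO 8601 without offset
  arrivalTime: string;
  departureTimeZone: string; // IANA time zone name
  arrivalTimeZone: string;
  origin: string;
  destination: string;
};

// Made-up sample data; "XX123" is not a real flight.
const MOCK_FLIGHTS = new Map<string, MockFlight>([
  [
    "XX123",
    {
      flightNumber: "XX123",
      departureTime: "2025-03-01T08:00:00",
      arrivalTime: "2025-03-01T16:30:00",
      departureTimeZone: "America/Los_Angeles",
      arrivalTimeZone: "America/New_York",
      origin: "San Francisco (SFO)",
      destination: "New York (JFK)",
    },
  ],
]);

// Normalize user input (strip whitespace, uppercase) and look it up.
// Returns null when the flight number is unknown.
function lookupMockFlight(input: string): MockFlight | null {
  const key = input.replace(/\s+/g, "").toUpperCase();
  return MOCK_FLIGHTS.get(key) ?? null;
}
```

Keeping the lookup behind a single function makes the later swap from mock data to a live API a one-call change in the UI code.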

Task 2 & 3 (Parallel Background): Researching a Live API and Designing a Logo Simultaneously

Here, the magic of parallelism begins. While the first agent builds the foundation, we dispatch two new agents with separate, concurrent tasks:

- Agent A (Research): "Look up the aviationstack API documentation. I have an API key; use it to make test calls with curl and determine the data interface for live flight data."

- Agent B (Design): "Design a few different mockups for a logo for our app. I want minimalist, classic, and calendar-themed options. The final choice will be our favicon."

While a human developer would have to tackle these sequentially, the agents work at the same time. In the time it takes to get a coffee, the logo designs are ready for review, and the API research is complete, including sample curl responses and a typed interface ready to be dropped into the code.
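
The typed interface the research agent produced is not reproduced in the article, so the following is only a sketch of what such an interface and a small mapper might look like. It assumes the flight-data API in question is aviationstack, and the raw field names (`data`, `flight.iata`, `departure.scheduled`, `timezone`, `airport`) reflect my reading of that API's flight records; verify them against the official documentation. `FlightDetails` and `toFlightDetails` are hypothetical names.

```typescript
// Assumed subset of a flight record from the API. Verify the field
// names against the official documentation before relying on them.
interface RawFlightEndpoint {
  airport: string | null;
  timezone: string | null;
  scheduled: string | null; // ISO 8601 timestamp
}

interface RawFlightRecord {
  flight: { iata: string | null };
  departure: RawFlightEndpoint;
  arrival: RawFlightEndpoint;
}

interface RawFlightsResponse {
  data: RawFlightRecord[];
}

// App-facing shape (hypothetical) with the fields the UI displays.
type FlightDetails = {
  flightNumber: string;
  startTime: string;
  endTime: string;
  startTimeZone: string;
  endTimeZone: string;
  startLocation: string;
  endLocation: string;
};

// Convert the first record of a response into the app's shape.
// Returns null when the response contains no flights; missing
// individual fields fall back to the string "unknown".
function toFlightDetails(res: RawFlightsResponse): FlightDetails | null {
  const first = res.data[0];
  if (!first) return null;
  return {
    flightNumber: first.flight.iata ?? "unknown",
    startTime: first.departure.scheduled ?? "unknown",
    endTime: first.arrival.scheduled ?? "unknown",
    startTimeZone: first.departure.timezone ?? "unknown",
    endTimeZone: first.arrival.timezone ?? "unknown",
    startLocation: first.departure.airport ?? "unknown",
    endLocation: first.arrival.airport ?? "unknown",
  };
}
```

Separating the raw API types from the app-facing type keeps provider-specific quirks, such as nullable fields, out of the UI components.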

The "Review & Redirect" Loop: Using Artifacts to Guide the Agents

After reviewing the generated logos, a choice is made. A simple comment is left for Agent B: "I like the classic aviation style. Add this as my favicon and update the site title." The agent intelligently picks up the feedback and implements the changes.

Simultaneously, the API research Implementation Plan is reviewed. It looks solid, but a small directive is added via a comment: "Great work. Please implement this in a 'utils' folder so I can integrate it into the route later. Do not change the route yet." The developer isn't writing code; they are providing high-level architectural guidance, much like a tech lead reviewing a junior developer's plan.

Merging Man and Machine: Taking Over in the Editor to Reach 100%

Once Agent A has created the API utility file, it's time to integrate it. Another prompt could handle this, but it is a good moment for the developer to step in for the final, nuanced integration where human judgment matters most. Opening the AI-powered editor, the developer deletes the old mock data logic. The editor, with full project context, immediately suggests the code to import and call the new live data utility. A few taps of the Tab key, and the application is migrated from mock data to a live API.

Closing the Loop: The Agent Tests Its Own Code in the Browser

With the core functionality in place, a final task is given: add Google Calendar integration. The agent is prompted to make each flight result clickable to generate a calendar event. Crucially, the prompt ends with: "Test this with the browser and show me a walkthrough when you are done."

The agent implements the feature, then launches the integrated browser. It automatically enters a flight number, clicks the result card, and verifies that the Google Calendar link opens with the correct pre-filled information. The entire process is recorded and presented back to the developer in a final Walkthrough artifact, giving the developer concrete, reviewable evidence that the feature works. This process highlights the power of automated end-to-end (E2E) testing with Anti-Gravity's AI-powered browser.
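
For readers curious what the calendar feature might involve, here is a rough sketch of building a pre-filled Google Calendar link. It is not the agent's actual output. It assumes the widely used `calendar/render?action=TEMPLATE` link format with `text`, `dates`, and `location` query parameters, which I am not aware of being formally documented, and `CalendarFlight`, `toCalendarStamp`, and `buildCalendarLink` are hypothetical names.

```typescript
// Hypothetical input shape; times are UTC instants in ISO 8601 form.
type CalendarFlight = {
  flightNumber: string;
  origin: string;
  destination: string;
  departureUtc: string; // e.g. "2025-03-01T16:00:00Z"
  arrivalUtc: string;
};

// Convert an ISO instant to the compact UTC stamp the link format uses:
// strip dashes, colons, and milliseconds from Date#toISOString output.
// Throws a RangeError if the input is not a parseable date.
function toCalendarStamp(isoInstant: string): string {
  return new Date(isoInstant)
    .toISOString()
    .replace(/[-:]/g, "")
    .replace(/\.\d{3}/, "");
}

// Build the link. The query parameter names are an assumption based on
// the commonly used template-link format, not on official documentation.
function buildCalendarLink(f: CalendarFlight): string {
  const title = `Flight ${f.flightNumber}: ${f.origin} to ${f.destination}`;
  const dates = `${toCalendarStamp(f.departureUtc)}/${toCalendarStamp(f.arrivalUtc)}`;
  return (
    "https://calendar.google.com/calendar/render?action=TEMPLATE" +
    `&text=${encodeURIComponent(title)}` +
    `&dates=${dates}` +
    `&location=${encodeURIComponent(f.origin)}`
  );
}
```

Because the function is pure, the browser test only has to confirm that the opened page shows the expected title and times; the URL construction itself can be unit tested separately.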

The Reality Check: Hurdles and Mindsets in the New Paradigm

This new workflow is more than just a productivity boost; it's a fundamental re-architecting of the development process that comes with its own set of challenges and required shifts in mindset.

A New Workflow vs. An Enhanced Editor

It's important to distinguish this paradigm from existing tools. GitHub Copilot and AI-native IDEs like Cursor are powerful enhancements to the traditional coding loop. They make the act of writing code faster. Anti-Gravity, in contrast, aims to replace large parts of that loop with a higher-level cycle of prompt-review-approve. It's a shift from being a craftsman at the keyboard to being an architect directing a team of craftsmen.

Navigating the Paradigm Shift: Skills, Security, and Scrum

Adopting this model requires more than just new software; it requires new skills and processes.

- The Skill Shift: The most valuable engineering skills evolve. The focus moves from line-by-line coding proficiency to the ability to decompose complex problems into clear, unambiguous prompts. Prompt engineering, architectural oversight, and the critical evaluation of AI-generated plans become paramount. This new cognitive load is about strategic direction, not tactical implementation.

- Security and Trust: Giving AI agents autonomous access to a terminal and codebase is a serious consideration. The solution lies in robust, sandboxed environments where agents operate with the principle of least privilege. An agent tasked with UI design should have no access to production keys or the terminal. The "Artifacts" system provides a crucial, auditable trail of an agent's intentions and actions, but it must be paired with rigorous technical safeguards.

- Agile Integration: How does this fit into a Scrum sprint? More naturally than it might seem. A user story can be the source of a high-level prompt. The agent-generated 'Implementation Plan' becomes a technical design document for review during backlog refinement. The final 'Walkthrough' can serve as the demonstration of a completed story during the Sprint Review and as evidence toward the team's Definition of Done.
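
The least-privilege idea from the Security and Trust point can be sketched as a deny-by-default capability table. This is an illustration only: the capability names, the roles, and the `isAllowed` helper are hypothetical, and a check like this would complement sandboxing rather than replace it.

```typescript
// Hypothetical capabilities an agent might request.
type AgentCapability =
  | "read-code"
  | "write-code"
  | "terminal"
  | "browser"
  | "secrets";

// Hypothetical role-to-capability grants. Note that the UI design role
// gets neither terminal access nor secrets.
const ROLE_GRANTS = new Map<string, readonly AgentCapability[]>([
  ["ui-design", ["read-code", "browser"]],
  ["api-research", ["read-code", "terminal"]],
  ["feature-dev", ["read-code", "write-code", "terminal", "browser"]],
]);

// Deny by default: unknown roles and unlisted capabilities are refused.
function isAllowed(role: string, capability: AgentCapability): boolean {
  const grants = ROLE_GRANTS.get(role) ?? [];
  return grants.indexOf(capability) !== -1;
}
```

The important design choice is the default: a role that is missing from the table gets nothing, so forgetting to configure an agent fails closed instead of open.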

Conclusion: The Future of Development is Concurrent

The transition from single assistants to parallel agents may prove to be the most significant leap in software development since the advent of the IDE. By enabling true concurrency, this new model promises to dissolve bottlenecks, compress timelines, and elevate the role of the developer to that of a strategic orchestrator. Environments like Anti-Gravity are the first step into this new world, one where the limiting factor is no longer typing speed, but the speed of thought.

This paradigm shift redefines productivity, transforming the developer from a sole builder into the conductor of a powerful digital orchestra. The future of software isn't just AI-assisted; it's AI-concurrent.

Which part of your current workflow would you delegate to a parallel agent first? The tedious research, the initial scaffolding, or the final testing? Share your thoughts in the comments below!
