The world of software development is defined by paradigm shifts. We moved from assembly language to high-level languages, from monolithic architectures to microservices, and from local text editors to cloud-based IDEs. Each shift unlocked new levels of productivity and abstraction. Today, we stand on the precipice of the next great leap: agent-assisted development. And Google DeepMind's new tool, Anti-Gravity, isn't just a step forward; it's a vision of the future.

This guide will take you on a practical, hands-on journey to build your first application using Anti-Gravity. We'll go from an empty folder to a fully functional Next.js flight tracker application, complete with live API data, a custom logo, and Google Calendar integration. More importantly, you'll learn a new way of working, where your role evolves from a line-by-line coder to a high-level orchestrator of intelligent AI agents.

What is Google's Anti-Gravity? A New Paradigm for Developers

It's easy to dismiss a new tool as just another AI code assistant. But Anti-Gravity is fundamentally different from tools like GitHub Copilot or traditional IDE plugins. Those tools operate at the level of code completion; Anti-Gravity operates at the level of intent and execution.

Beyond Autocomplete: Introducing Agent-Assisted Development

Instead of suggesting the next few lines of code, you give an Anti-Gravity agent a high-level task, like "Build a flight lookup web app." The agent then takes on the entire workflow:

- Research: It can browse the web to find the best APIs or documentation.

- Planning: It creates a detailed "Implementation Plan" for your review.

- Execution: It writes code, runs terminal commands, and creates files.

- Testing: It can spin up a browser to interactively test the UI it just built.

- Reporting: It delivers a "Walkthrough" artifact summarizing its work and verification steps.

This isn't just about writing code faster; it's about automating the vast amount of "meta-work" that surrounds coding: the research, planning, testing, and documentation that consume a significant portion of a developer's day.

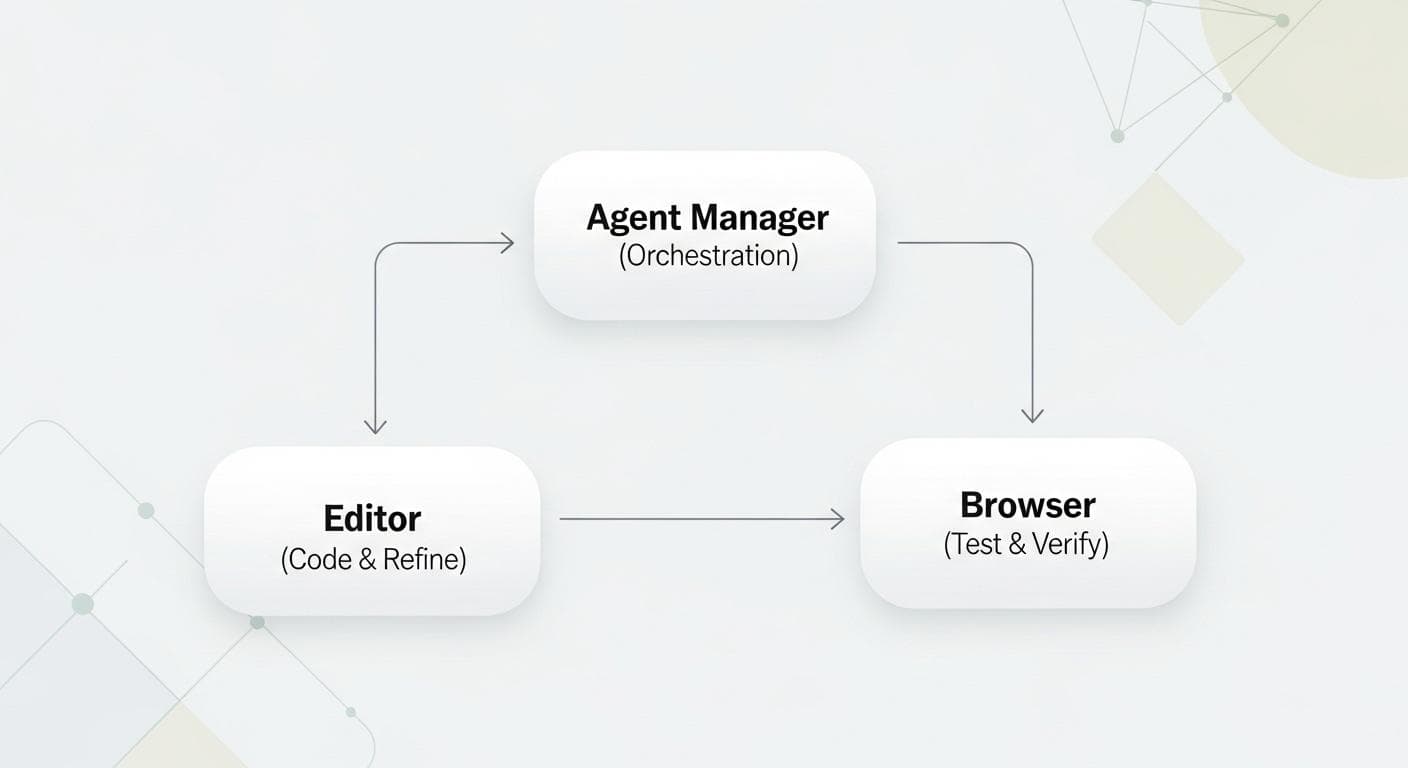

The Three Core Surfaces: Agent Manager, Editor, and Browser

Anti-Gravity integrates this entire workflow into a unified experience across three distinct but interconnected surfaces:

- The Agent Manager: Your central command center. Here, you create and manage agents, assign them high-level tasks, and review their progress across all your projects. It's the ultimate tool for orchestrating work.

- The Editor: A fully-featured, AI-powered code editor. When you need to get hands-on, you can seamlessly transition from the Agent Manager to the Editor. It includes powerful, context-aware autocompletion and gives you direct access to the agent for granular tasks.

- The Browser: An integrated, agent-controllable browser. This is a game-changer for automated testing. Agents can launch the browser, navigate your app, fill out forms, and click buttons to verify their own work, providing a closed-loop development cycle.

Project Overview: What We're Building

We'll be building a real-world application to showcase the power of this new paradigm. Our Flight Tracker will be a simple yet powerful web app that demonstrates the end-to-end capabilities of Anti-Gravity.

Features of Our Flight Tracker App

- A clean UI built with Next.js and React.

- An input form to search for flights by flight number.

- Integration with the live Aviation Stack API to fetch real-time flight data.

- Display of key flight details: start/end times, locations, and time zones.

- A custom, AI-generated logo and favicon.

- One-click functionality to add flight details directly to your Google Calendar.

Prerequisites

Before we begin, make sure you have the following set up:

- Node.js: Ensure you have Node.js (version 18.x or later) installed.

- Google Account: You'll need a standard Google/Gmail account to sign in to Anti-Gravity.

- Aviation Stack API Key: Head to [AviationStack.com](https://aviationstack.com/) and sign up for a free API key. We'll need this later to fetch live flight data.

Step 0: Setting Up Your Anti-Gravity Workspace

First, let's get the Anti-Gravity application running and create our project folder. After launching the app and signing in with your Google account, you'll land in the main interface.

Installation and Onboarding

The initial setup is straightforward. Anti-Gravity will guide you through a brief onboarding to introduce the core concepts. You'll be prompted to select a development style. For this tutorial, we recommend choosing "Agent-Assisted Development." This mode empowers the agent to make decisions and execute commands on its own for simple tasks, while still prompting you for approval on more complex or sensitive operations. It strikes the perfect balance between automation and control.

Creating Your "flight-tracker" Project Folder

In the left sidebar, which serves as your workspace navigator, click to add a new workspace. Choose the "Open Folder" option to work on your local machine. Create a new, empty folder named flight-tracker and open it. This will be the root of our project.

Step 1: The Initial Prompt - Scaffolding the App with an Agent

With our empty folder open, it's time to give our first instruction. In the Agent Manager, start a new conversation and provide a detailed, clear prompt that describes the initial version of our application.

Writing an Effective Initial Prompt

A good prompt is specific and outlines both the functionality and the technology stack. Here's the prompt we'll use:

Build me a flight lookup Next.js web app where the user can put in a flight number and the app gives you the start time, end time, time zones, start location, and end location of the flight. For now, use a mock API that returns a list of matching flights. Display the search results under the form input.

Once you send this prompt, the agent springs into action. It will correctly infer that the first step is to scaffold a new Next.js project and will run npx create-next-app on your behalf.
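To make the "mock API" part of the prompt concrete, here is a minimal sketch of what such a lookup could look like. The agent's actual output will differ; the Flight field names below simply mirror the prompt, and the sample flights and the searchMockFlights helper are hypothetical.

```typescript
// Hypothetical shape for the mock data. The fields mirror the prompt
// (times, time zones, locations); they are not taken from any real API.
interface Flight {
  flightNumber: string;
  startTime: string; // local departure time, ISO 8601 without offset
  endTime: string; // local arrival time, ISO 8601 without offset
  startTimeZone: string; // IANA time zone name
  endTimeZone: string;
  startLocation: string;
  endLocation: string;
}

// Made-up sample flights, for illustration only.
const MOCK_FLIGHTS: Flight[] = [
  {
    flightNumber: "AA100",
    startTime: "2025-01-15T18:30:00",
    endTime: "2025-01-16T06:45:00",
    startTimeZone: "America/New_York",
    endTimeZone: "Europe/London",
    startLocation: "New York (JFK)",
    endLocation: "London (LHR)",
  },
  {
    flightNumber: "UA200",
    startTime: "2025-01-15T09:00:00",
    endTime: "2025-01-15T12:10:00",
    startTimeZone: "America/Los_Angeles",
    endTimeZone: "America/Denver",
    startLocation: "San Francisco (SFO)",
    endLocation: "Denver (DEN)",
  },
];

// Returns every mock flight whose number contains the query,
// ignoring case and surrounding whitespace.
function searchMockFlights(query: string): Flight[] {
  const needle = query.trim().toUpperCase();
  if (needle === "") {
    return [];
  }
  return MOCK_FLIGHTS.filter((flight) =>
    flight.flightNumber.toUpperCase().includes(needle)
  );
}
```

Returning a list rather than a single flight matches the prompt's request to display "a list of matching flights" under the form.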

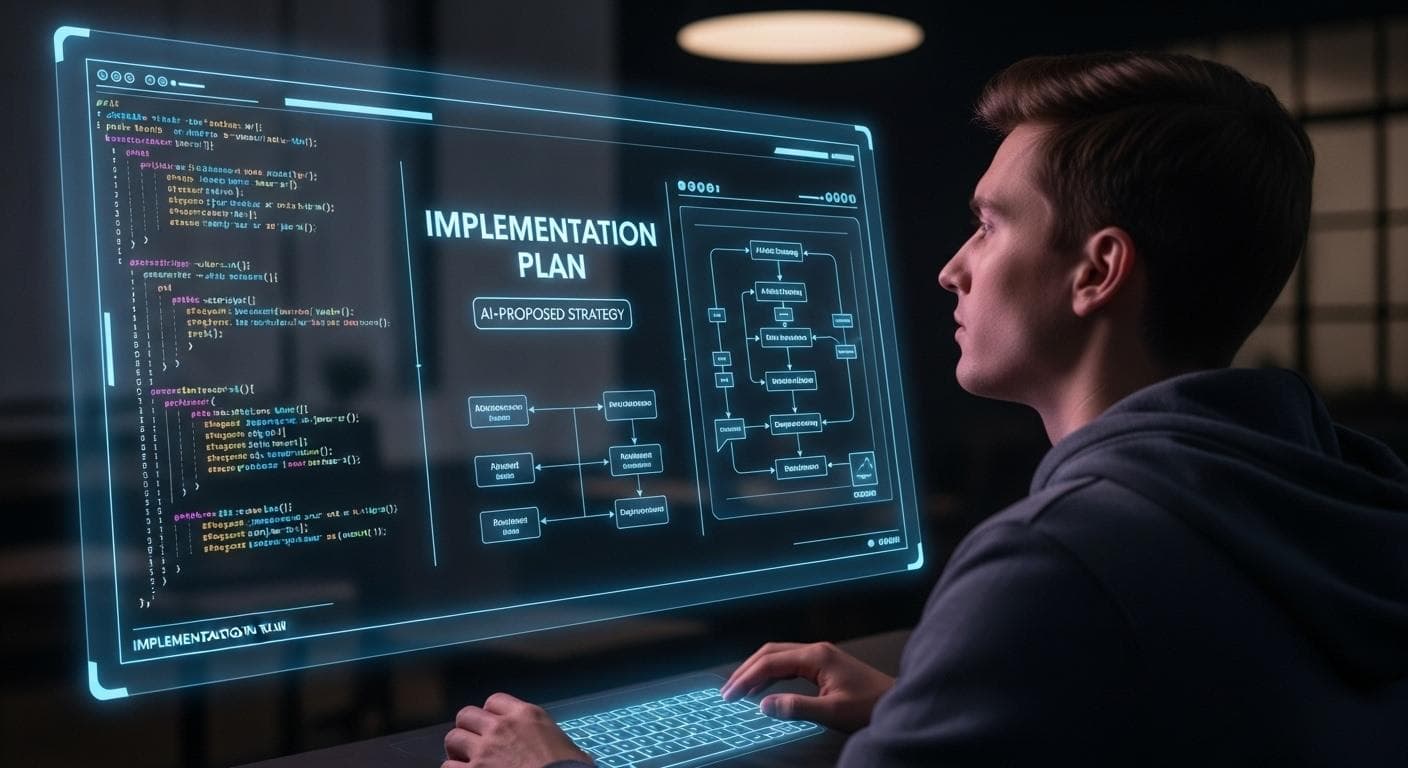

Understanding Agent Artifacts: Task Lists & Implementation Plans

As the agent works, it generates several "artifacts" that provide transparency into its process. You can view these in the right sidebar. Initially, you'll see a Task List, which is the agent's internal to-do list. This is a fantastic way to monitor its progress in real-time.

After some initial work, the agent will produce its first major artifact for your review: an Implementation Plan. This is a critical checkpoint. It's a markdown document where the agent outlines its strategy: the components it will create, the data structures it will use, and how it plans to verify its work. Approving this plan is what gives the agent permission to write code.

Best Practices for Reviewing Implementation Plans

Treat the Implementation Plan as you would a code review from a junior developer. Your careful review is key to ensuring a high-quality outcome. Here's what to look for:

- Correctness & Logic: Does the plan accurately interpret your prompt? Does the proposed logic correctly solve the core problem?

- Architecture & File Structure: Does the plan adhere to the conventions of your chosen framework (e.g., Next.js App Router)? Is it creating files in logical locations?

- Dependencies: Is the agent proposing to add new libraries? Are they well-maintained, secure, and appropriate for the task?

- Security: Is the agent handling sensitive information, like API keys, correctly? It should be using environment variables (.env.local), not hardcoding secrets.

- Scope: Is the plan too ambitious or too narrow? You can guide the agent by commenting, "Just focus on the API route for now; we'll handle the UI later."
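As a reference point for the security check, here is a small sketch of what "using environment variables" might look like in code. The variable name AVIATIONSTACK_API_KEY and the requireApiKey helper are assumptions made for illustration; the agent may choose different names.

```typescript
// Reads the API key from an environment-like object instead of hardcoding it.
// AVIATIONSTACK_API_KEY is an assumed variable name; in a Next.js project it
// would be defined in .env.local, which should stay out of version control.
function requireApiKey(env: Record<string, string | undefined>): string {
  const key = env["AVIATIONSTACK_API_KEY"];
  if (key === undefined || key.trim() === "") {
    // Fail loudly so a missing key is caught at startup, not mid-request.
    throw new Error("AVIATIONSTACK_API_KEY is not set; add it to .env.local");
  }
  return key.trim();
}

// In server-side code this would typically be called as:
//   const apiKey = requireApiKey(process.env);
```

If a plan shows a literal key inside a source file instead of a lookup like this, that is worth a comment before you approve it.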

Step 2: From Mock Data to Live API - Agent-Led Research & Implementation

Our initial app works with mock data, but a real flight tracker needs a live API. We will now task an agent to handle the research and initial implementation for us.

Tasking an Agent to Research the Aviation Stack API

In a new conversation, we can give the agent a research-oriented task. Notice how we can provide it with context, like an API key, to improve the quality of its work.

Look up the Aviation Stack API. I already have an API key [Your_API_Key_Here] if you want to test with curls. Look up the documentation and make sure you use the curl responses to get the interface.

The agent will now perform a Google search, find the official documentation, read it, and use the provided API key to make actual curl requests. This allows it to understand the exact structure of the API response.

Reviewing the Research and Approving the Plan

The result of this research is another Implementation Plan. This one will be rich with detail, including the API endpoint, sample data from the curl commands, and a proposed TypeScript interface for the data. This is where the human-in-the-loop model shines. We can review the plan and even leave comments, just like in a Google Doc.

Let's add two comments to guide the agent:

- On the mention of the API key: "Use the key I gave you in .env.local."

- At the end of the plan: "Implement this in a util folder so that I can apply it to the route. Don't change the route yet."

After submitting our feedback, the agent incorporates it and creates a new utility file (utils/aviationStack.ts) containing the logic to fetch data from the live API, all without us writing a single line of code.
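For orientation, a utility like this might look roughly like the sketch below. It is not the agent's actual output. The endpoint URL, the access_key and flight_iata query parameters, and the response fields reflect our understanding of the Aviation Stack documentation and should be checked against the curl responses; buildFlightsUrl, fetchFlights, and FetchLike are hypothetical names.

```typescript
// A subset of the response fields. Field names are assumptions based on the
// Aviation Stack docs; verify them against a real response.
interface AviationStackEndpoint {
  airport: string | null;
  timezone: string | null;
  scheduled: string | null;
}

interface AviationStackFlightData {
  flight_date: string;
  flight_status: string;
  departure: AviationStackEndpoint;
  arrival: AviationStackEndpoint;
  flight: { iata: string | null };
}

// Minimal fetch-like signature so the utility can be exercised with a fake.
type FetchLike = (url: string) => Promise<{
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}>;

// Assumed endpoint; some plans may only allow plain http.
const BASE_URL = "https://api.aviationstack.com/v1/flights";

// Builds the request URL, normalizing the flight code to uppercase.
function buildFlightsUrl(flightIata: string, apiKey: string): string {
  const params = [
    `access_key=${encodeURIComponent(apiKey)}`,
    `flight_iata=${encodeURIComponent(flightIata.trim().toUpperCase())}`,
  ];
  return `${BASE_URL}?${params.join("&")}`;
}

// Fetches matching flights and returns an empty list when none are found.
async function fetchFlights(
  flightIata: string,
  apiKey: string,
  fetchImpl: FetchLike
): Promise<AviationStackFlightData[]> {
  const response = await fetchImpl(buildFlightsUrl(flightIata, apiKey));
  if (!response.ok) {
    throw new Error(`Aviation Stack request failed with status ${response.status}`);
  }
  const body = (await response.json()) as { data?: AviationStackFlightData[] };
  return Array.isArray(body.data) ? body.data : [];
}
```

In the app, the global fetch available in Node.js 18 and later can be passed as fetchImpl. Keeping the URL builder separate from the network call makes the plan easier to review, because you can check the query string without making a request.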

Step 3: Taking the Controls - Integrating the API in the Editor

Now that the agent has created the API utility, it's our turn. While the agent could certainly proceed with the integration, this is a good opportunity to explore how human-AI collaboration works when you want fine-grained control. We'll use the AI-powered editor to see how Anti-Gravity assists when you decide to take the keyboard.

When to Intervene: Moving from Agent to Editor

You can open the project in the editor at any time by pressing Command + E or clicking the "Open in Editor" button. We'll navigate to our main route file (app/page.tsx). Here, we can see the original code that uses the mock flight data.

Leveraging AI Autocomplete to Refactor the UI

We'll delete the mock data logic. Immediately, the editor's AI, aware of the new aviationStack.ts file and the overall context of our project, will suggest the code needed to call our new function. Simply pressing Tab accepts the suggestion, including the necessary import statement.

The next step is to update our UI components to use the new data types from the live API instead of the mock schema. As you start typing, the context-aware autocomplete will make this process incredibly fast. Change Flight to AviationStackFlightData, and then let the AI guide you to update all the property names (flight.departure.airport instead of flight.startLocation, etc.). This entire refactoring process, which could be tedious and error-prone, takes mere seconds.
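One way to picture the rename is as a mapping from the live API's nested fields to the flat names the mock schema used. The sketch below is an illustration under assumptions, not the code the editor will suggest: the reduced AviationStackFlightData shape, the FlightSummary type, and summarizeFlight are hypothetical, and only flight.departure.airport and flight.startLocation come from the steps above.

```typescript
// Reduced, assumed shape of one live API record.
interface AviationStackEndpoint {
  airport: string | null;
  timezone: string | null;
  scheduled: string | null;
}

interface AviationStackFlightData {
  flight: { iata: string | null };
  departure: AviationStackEndpoint;
  arrival: AviationStackEndpoint;
}

// Flat shape similar to what the mock-based UI consumed.
interface FlightSummary {
  flightNumber: string;
  startLocation: string;
  endLocation: string;
  startTime: string;
  endTime: string;
  startTimeZone: string;
  endTimeZone: string;
}

// Live data can contain nulls, so every field gets a visible fallback.
function summarizeFlight(flight: AviationStackFlightData): FlightSummary {
  const orUnknown = (value: string | null): string => value ?? "Unknown";
  return {
    flightNumber: orUnknown(flight.flight.iata),
    startLocation: orUnknown(flight.departure.airport),
    endLocation: orUnknown(flight.arrival.airport),
    startTime: orUnknown(flight.departure.scheduled),
    endTime: orUnknown(flight.arrival.scheduled),
    startTimeZone: orUnknown(flight.departure.timezone),
    endTimeZone: orUnknown(flight.arrival.timezone),
  };
}
```

Whether you rename properties directly in the component or map them once like this is a design choice; the mapping keeps null handling in a single place.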

Agent-Led Testing: Watching the AI Verify its Own Code

Before we even manually test, let's look back at our first agent. After scaffolding the app, it automatically launched the browser and tested the form by typing in a sample flight number. It moved the cursor, clicked the button, and verified that results appeared. The agent then generated a Walkthrough artifact, complete with screen recordings of its test, to prove its work was successful. This automated verification loop is a core strength of Anti-Gravity.

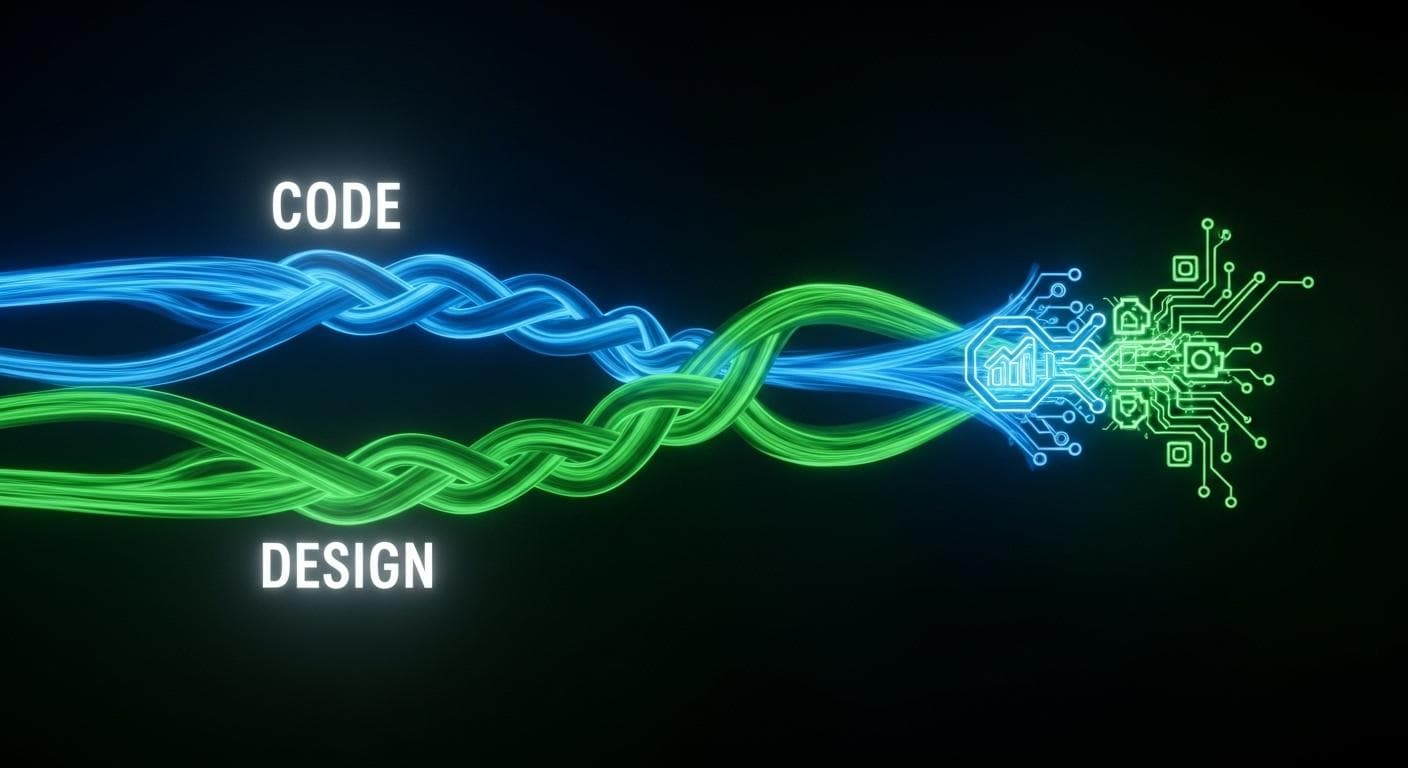

Step 4: Parallel Processing - Designing a Logo While Coding

One of the most revolutionary features of Anti-Gravity is the ability to run multiple agents concurrently. While our first agent works on the core application and our second researches the API, we can spin up a third for a completely different task: design.

How Anti-Gravity Handles Multiple Concurrent Agents

The Agent Manager acts as your central hub, allowing you to monitor the progress of all active agents across different tasks. This multi-threaded workflow allows you to delegate various aspects of a project simultaneously, dramatically accelerating development.

Prompting for Logo Design and Implementing the Favicon

Let's give a new agent a creative prompt. Anti-Gravity has direct access to Google's latest image generation models.

Design a few different mockups for a logo for our app. I want one that's more minimalist, one that's more classic, one that's clearly a calendar, and any others that you think might fit. I want to use this as the favicon for our app.

In moments, the agent will return with several logo options. We'll choose our favorite (the classic aviation theme) and give it a follow-up command: "I like the global flight tracker with aviation theme. Add this as my favicon. Also, update the site title to 'Global Flight Tracker'."

The agent will then take the chosen image, place it in the correct public folder, and update the HTML layout to include it as the favicon and set the new title. This entire creative and implementation cycle happens in the background while we focus on the core application logic.
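If the project uses the Next.js App Router, the title and favicon change could end up looking something like the sketch below. This is an assumption about the result rather than the agent's actual edit: the file name /favicon.png is hypothetical, and in a real app/layout.tsx the object would be exported and typed with the Metadata type from next.

```typescript
// Sketch of a metadata object for app/layout.tsx (assumed App Router setup).
// In the real file this would be written as:
//   export const metadata: Metadata = { ... }
const metadata = {
  title: "Global Flight Tracker",
  icons: {
    // Path is relative to the public folder; the file name is hypothetical.
    icon: "/favicon.png",
  },
};
```

Checking that the referenced file actually exists in the public folder is a quick way to verify the agent's work here.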

Step 5: The Final Feature - Integrating Google Calendar

Our app is functional and looks good, but let's add one final polished feature: the ability to add a flight to Google Calendar with a single click. We can delegate this entire feature implementation to an agent.

Prompting the Final Feature Request

Our prompt needs to be clear about the desired user interaction and the necessary data.

For each flight result, make the entire card clickable to open a Google calendar link with the flight information, notably the times and locations. Test this with the browser and show me a walkthrough when you are done.

Reviewing the Final "Walkthrough" Artifact

We can watch the agent work in real-time. It will analyze the existing code, identify the flight result component, and implement the logic to construct the correct Google Calendar URL with all the flight details as parameters.
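The link-building logic can be sketched as follows. This is a minimal illustration, not the agent's code: it assumes the commonly used Google Calendar event-template URL with action=TEMPLATE, text, dates, location, and details parameters, and the CalendarEventInput type and both helper names are hypothetical.

```typescript
// Hypothetical input shape for one calendar event.
interface CalendarEventInput {
  title: string;
  start: Date;
  end: Date;
  location: string;
  details: string;
}

// Formats a Date as a compact UTC stamp, e.g. 20250115T133000Z.
function toCalendarStamp(date: Date): string {
  return date
    .toISOString() // e.g. 2025-01-15T13:30:00.000Z
    .replace(/[-:]/g, "") // drop date and time separators
    .replace(/\.\d{3}/, ""); // drop milliseconds
}

// Builds a link that opens a pre-filled Google Calendar event.
function buildGoogleCalendarUrl(event: CalendarEventInput): string {
  const params: Array<[string, string]> = [
    ["action", "TEMPLATE"],
    ["text", event.title],
    ["dates", `${toCalendarStamp(event.start)}/${toCalendarStamp(event.end)}`],
    ["location", event.location],
    ["details", event.details],
  ];
  const query = params
    .map(([key, value]) => `${key}=${encodeURIComponent(value)}`)
    .join("&");
  return `https://calendar.google.com/calendar/render?${query}`;
}
```

The sketch expects start and end as Date objects, so the flight's scheduled timestamps would need to be parsed with the correct time zone before being passed in. That conversion is worth checking closely in the agent's walkthrough, since departure and arrival are often in different zones.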

Once the code is written, the agent launches the browser. We can see its blue cursor navigate to the app, enter a flight number, and then click on a result card. A new tab opens with a pre-filled Google Calendar event, confirming the feature works perfectly. The agent then compiles this into a final Walkthrough artifact, giving us definitive proof that the task is complete and correct.

Step 6: From Code to Commit - Finalizing Your Project

With all features implemented, the last step is to commit our work. Anti-Gravity assists here as well.

Using AI to Generate a Context-Aware Commit Message

Inside the editor's source control panel, there's a button to generate a commit message. This isn't a simple template. The AI analyzes all the code changes, as well as the conversation history with all the agents. It understands the intent behind the work. The result is a comprehensive, well-structured commit message that accurately summarizes everything from the initial scaffolding to the API integration and calendar feature.

We'll review the message, click "Commit," and our work is done. We've built a complete, data-driven web application from a blank folder in a fraction of the time it would traditionally take.

Anti-Gravity vs. The Old Way: Why This is a Game-Changer

The true impact of agent-assisted development becomes clear when you compare it to a traditional workflow. The developer's role shifts from a line-by-line implementer to a high-level architect and reviewer.

| The Traditional Workflow | The Anti-Gravity Workflow |
|---|---|
| Manually scaffold project with CLI, configure boilerplate. | Delegate scaffolding to an agent with a single prompt. |
| Multiple browser tabs open for API documentation, Stack Overflow, and tutorials. | Task an agent to research the API; it returns a summarized plan with verified data structures. |
| Write boilerplate code for data fetching, state management, and type definitions. | Agent writes the utility code; developer uses AI autocomplete to quickly integrate it. |
| Constantly switch between editor, browser, and terminal to test changes. | Agent autonomously tests its own code in a browser and provides a video walkthrough. |
| Creative tasks like logo design are outsourced or done in a separate application, blocking development. | Delegate design tasks to a parallel agent without leaving the development environment. |

Troubleshooting 101: When Agents Go Astray

While incredibly powerful, AI agents are not infallible. Part of mastering agent-assisted development is learning how to guide and correct them. Here are a few common scenarios and how to handle them:

Correcting Course: The Art of Iterative Prompting

Sometimes an agent will misinterpret a prompt or head in a direction you didn't intend. Don't delete the conversation and start over. Instead, treat it like a real conversation.

- Be Specific: If an agent's plan is too vague, provide a clarifying follow-up. For example: "That's a good start, but for the API call, use the fetch API directly instead of a library like Axios."

- Use Comments: The ability to comment on an Implementation Plan is your most precise tool. Highlight the exact section you disagree with and provide direct instructions.

- Provide Examples: If you want code structured in a specific way, give the agent a small example in your prompt. "Please structure the component like this: const MyComponent = () => { ... }"

Handling Hallucinations and Incorrect Assumptions

An agent might occasionally "hallucinate" a function name, a file path, or an API field that doesn't exist. This usually happens when its context is incomplete. The solution is to provide more context.

- Bad Prompt: "Refactor the user logic."

- Good Prompt: "Read the contents of src/utils/user-auth.ts. Then, refactor the getUserProfile function to also return the user's avatar URL from the profile object."

By directing the agent to read the relevant file first, you anchor its understanding in the reality of your codebase, dramatically reducing errors.

Integrating Anti-Gravity with Your Existing Projects

You don't need to start from a blank slate to benefit from Anti-Gravity. Integrating it into an existing project is straightforward and unlocks massive potential for refactoring and feature addition. The key is to properly "onboard" the agent to your codebase.

When you open your existing project folder, start with an introductory prompt:

"I want you to familiarize yourself with this codebase. Start by reading the README.md and the package.json to understand the project's purpose and dependencies. Then, list the main directories inside the /src folder and give me a one-sentence summary of what you think each one is for. Finally, tell me what framework you believe this project is built on."

This initial prompt forces the agent to build a mental model of your project's architecture. Once it has this context, you can ask it to perform complex tasks, like adding a new API endpoint or refactoring a legacy component, with a much higher degree of success.

Conclusion and Next Steps

Through this tutorial, you've not only built a flight tracker but have experienced a fundamentally new way to create software. You've learned to prompt and guide AI agents, review their plans, intervene when necessary in a powerful editor, and leverage parallel automation to accomplish multiple tasks at once. This is the future of development: a collaborative partnership between human creativity and artificial intelligence.

This is just the beginning. The potential for agent-assisted development is limitless, promising a future where building complex applications becomes faster, more efficient, and more creative than ever before. Welcome to the new paradigm.

What kind of application would you build first with an AI development partner like Anti-Gravity? Share your ideas in the comments below!
