If you're still using AI exclusively through a web browser, you're working with one hand tied behind your back. The endless cycle of lost context, notes scattered across multiple chat windows, and constant copy-pasting is not just inefficient; it's actively holding you back. There is a fundamentally better way, a power-user workflow that the big AI companies rarely talk about, and it all happens in your terminal.

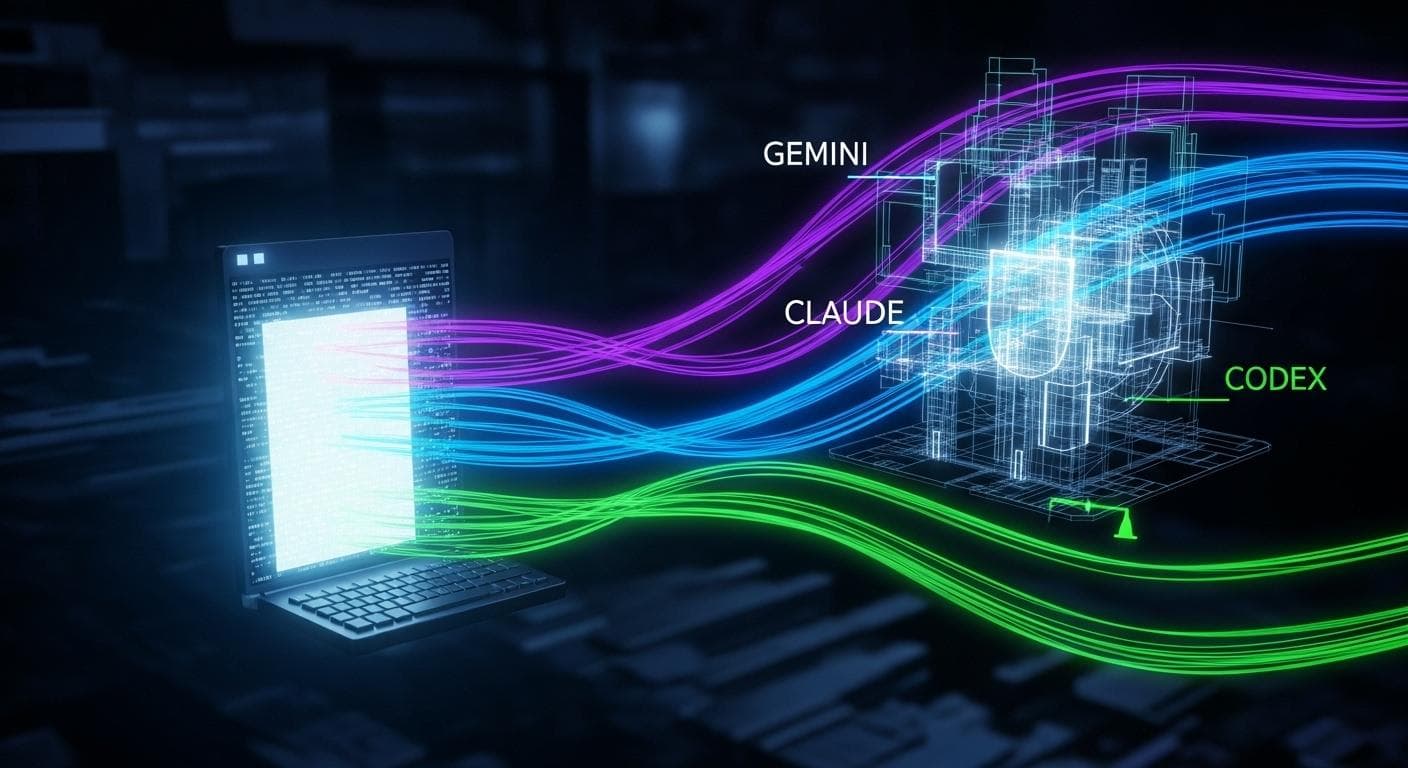

This isn't just about using a single command-line interface (CLI) tool. This is a complete strategic shift. We're talking about a multi-AI strategy: using specialized CLI clients for Gemini, Claude, and OpenAI models together, in the same project, sharing a unified local context. Once you experience this level of control and speed, you will never go back to the browser.

The Browser Is Broken: Why Your Current AI Workflow Fails

Let's be honest, does this sound familiar? You start a research project in ChatGPT. After a dozen prompts, the scroll bar is a tiny speck, and the AI has forgotten the initial instructions. To double-check its facts, you open new tabs for Claude and Gemini, creating parallel, disconnected conversations. You try to consolidate key findings in a notes app, but it quickly becomes an unmanageable mess.

This workflow is flawed for three critical reasons:

- Scattered Context: Your project's "brain" is fragmented across dozens of ephemeral browser chats. There is no single source of truth, forcing you to constantly re-explain your goals to the AI.

- Vendor Lock-In: Your entire project history, your prompts, and the AI's outputs are trapped inside a proprietary platform. Moving to a new, better model means starting from scratch.

- Lack of Control: You cannot easily integrate the AI with your local files, scripts, or development environment. Every interaction is manual and depends on copy and paste.

The Terminal Is Your Superpower: The Core Principles

Moving your AI workflow to the terminal solves these problems by establishing a new set of powerful principles.

Principle 1: Local-First Project Management

Your project is no longer a collection of browser tabs; it's a folder on your hard drive. Every piece of research, every draft, and every decision is a local file. This gives you ownership and portability. You can use version control like Git, back it up anywhere, and work completely offline if you're using local models.

Principle 2: Shared Context via Markdown Files

This is the secret sauce. CLI tools can generate and read a local context file (e.g., GEMINI.md, CLAUDE.md). This file acts as a persistent memory or a project charter. It summarizes the project's goals, key decisions, and file structure. Every time you start a new session in that directory, the AI loads the file and knows what you're working on. No more re-explaining.

Principle 3: Role Specialization for Each AI Model

Different AI models excel at different tasks. Instead of trying to find one model that does everything, the terminal allows you to easily delegate. You can use Gemini for its powerful web research capabilities, Claude for its nuanced and creative writing, and an OpenAI model like Codex for code generation or high-level analysis, all operating on the same set of local files.

The Toolkit: Installing Your AI Terminal Clients

Getting started is straightforward. You'll need Node.js and npm installed on your system. Here are the commands to install the essential tools.
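Before installing anything, it may help to confirm that both prerequisites are reachable from your shell. The sketch below is a minimal check, assuming a POSIX-style shell (macOS, Linux, or WSL); `check_tool` is a hypothetical helper name used only for this illustration.

```shell
# check_tool is a hypothetical helper: it reports whether a command is on PATH.
check_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "$1 found at $(command -v "$1")"
  else
    echo "$1 not found; install Node.js and npm first"
  fi
}

check_tool node
check_tool npm
```

If either line reports "not found", install Node.js before running the npm commands in the next sections.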

Setting Up Google's gemini-cli

Gemini's CLI is excellent for getting started due to its generous free tier. At launch it provided access to the Gemini 2.5 Pro model. Open your terminal and run:

npm install -g @google/gemini-cli

Then run gemini to log in with your Google account and begin.

Setting Up Anthropic's claude-code

claude-code is arguably the most powerful tool in this stack, especially for its 'Agents' feature. It works with a Claude Pro subscription ($20/month at the time of writing), which lets you use the tool in the terminal without paying separately for API usage. Run this command to install:

npm install -g @anthropic-ai/claude-code

Then run claude to authenticate your account.

Setting Up OpenAI's Codex CLI

OpenAI now provides an official, open-source command-line tool, Codex CLI. It's a powerful client that supports features like multimodal inputs. Note that the npm package named openai is the API library for Node.js, not this terminal client. To install the CLI, run:

npm install -g @openai/codex

Then run codex to sign in. Codex CLI also reads a local context file (named AGENTS.md by convention) to stay in sync with your project.

Practical Playbook: A Step-by-Step Project Walkthrough

Let's put this all together with a hypothetical project: creating a blog post about the best home coffee brewing methods.

Step 1: Initialize the Project and Context Files

Create a new directory (mkdir coffee-project) and cd into it. Launch gemini. It won't create a context file for you, so you'll make one yourself: touch GEMINI.md. Add a few lines describing your project. Now, every time you launch gemini in this folder, it will automatically load GEMINI.md as its context.
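As a rough sketch of this step, the commands below create the folder and write a starter context file in one go. The headings and wording inside GEMINI.md are illustrative assumptions; the tool only needs the file to exist and describe the project in plain language.

```shell
# Create the project folder and move into it.
mkdir -p coffee-project
cd coffee-project

# Write a starter context file. The section names below are illustrative,
# not a format required by gemini.
cat > GEMINI.md <<'EOF'
# Project: Home coffee brewing blog post

## Goal
Write a blog post about the best home coffee brewing methods.

## Files
- research-summary.md: research notes (to be created)
- intro-draft.md: introduction draft (to be created)
EOF
```

Keeping the file short makes it easier to update by hand at the end of each session.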

Step 2: Assign Roles - Gemini the Researcher

In your Gemini terminal, assign the first task:

"Research the top 10 most cited articles on pour-over coffee methods. Summarize the key steps, required equipment, and common mistakes into a file named 'research-summary.md'."

Gemini will perform the search and, with your permission, write the file directly to your project folder.

Step 3: Claude the Creative Writer

Now, open a new terminal window in the same directory and launch claude. Run the command /init. This will scan the folder (including the file Gemini just made) and create a CLAUDE.md file. Give Claude its role:

"Using the 'research-summary.md' file as a guide, write a 500-word engaging introduction for a blog post titled 'Mastering the Art of Pour-Over'. Write it to 'intro-draft.md'."

Step 4: Codex the Critic and Analyst

Open a third terminal and launch OpenAI's Codex CLI with the codex command. After initializing its context file, give it a high-level analytical task:

"Review 'intro-draft.md'. Identify any weaknesses in the hook and suggest three alternative opening sentences to increase reader engagement."

You now have three specialized AIs collaborating on a single project, seamlessly sharing work through local files.

Step 5: Manually Syncing Context

At the end of a work session, the most crucial step is to consolidate the context. This is a manual but vital process. Instruct one of the AIs (Claude is particularly good at this) to create a comprehensive session summary. Then copy and paste this summary at the top of your GEMINI.md, CLAUDE.md, and AGENTS.md files. This ensures that the next time you open any of the tools, they are all aware of the latest progress.
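If the copy-paste step becomes tedious, a small script can do the prepending for you. The sketch below assumes the AI has saved its summary to a file called session-summary.md (a hypothetical name) and that the three context files use the upper-case names GEMINI.md, CLAUDE.md, and AGENTS.md; adjust both to match your project.

```shell
# Hypothetical file name: ask the AI to save its summary here first.
SUMMARY="session-summary.md"

# Demo setup so the sketch runs in an empty folder; not needed in a real project.
[ -f "$SUMMARY" ] || printf '## Session summary\n- Drafted the intro\n' > "$SUMMARY"

for ctx in GEMINI.md CLAUDE.md AGENTS.md; do
  touch "$ctx"                      # create the context file if it is missing
  tmp="$(mktemp)"
  # New summary first, then a blank line, then the previous contents.
  { cat "$SUMMARY"; echo; cat "$ctx"; } > "$tmp"
  cat "$tmp" > "$ctx"               # overwrite in place, keeping file permissions
  rm -f "$tmp"
done
```

Because the summary is prepended on every run, the context files grow over time, so it is worth trimming older entries now and then.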

Overcoming Common Hurdles

Transitioning to the terminal comes with a small learning curve, but the payoff is immense. Here are some common issues and how to solve them:

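- Permission Errors: If you get an `EACCES` error during installation with `npm`, your user account cannot write to npm's global directory. Prefixing the command with `sudo` on macOS or Linux (`sudo npm install -g ...`) gets past the error, but npm's documentation recommends installing Node.js through a version manager such as `nvm`, or changing npm's default directory to one you own, so that global installs never need administrator privileges.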

- Command Not Found: If you install a tool but your terminal says the command doesn't exist, it's likely a `PATH` issue. This means your terminal doesn't know where to look for the installed program. A quick search for "add npm global to PATH" for your specific operating system will provide a solution.

- The Intimidation Factor: Don't let the blinking cursor scare you. You only need to know a handful of commands (`cd` to change directory, `ls` or `dir` to list files, and `mkdir` to create a directory) to be incredibly effective.
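For the Command Not Found case on macOS or Linux, the fix usually amounts to adding npm's global bin directory to `PATH`. The following is a minimal sketch under that assumption; `NPM_PREFIX` is a variable name chosen for this example, and the `~/.npm-global` fallback is hypothetical.

```shell
# Ask npm where global packages live; fall back to a hypothetical
# ~/.npm-global directory if npm itself cannot be found yet.
NPM_PREFIX="$(npm config get prefix 2>/dev/null || echo "$HOME/.npm-global")"

# On macOS and Linux, global executables are placed in the prefix's bin directory.
export PATH="$NPM_PREFIX/bin:$PATH"

echo "Added $NPM_PREFIX/bin to PATH for this session"
```

The change lasts only for the current terminal session; to make it permanent, add the `export` line to your shell's startup file (for example `~/.bashrc` or `~/.zshrc`).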

Level Up: Agents and Open-Source Managers

The real power of this workflow emerges when you master advanced features.

The Power of Agents in claude-code

claude-code allows you to create 'agents': specialized instances of Claude with their own instructions and a fresh context window. Imagine you're deep in a writing session, but you need a quick technical review. Instead of cluttering your main chat, you can delegate:

"@brutal-critic-agent, please review the last 500 words for technical accuracy."

Claude spawns a new, temporary instance to perform that task, preserving the context of your primary conversation.
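An agent like this is typically defined in a Markdown file with a short YAML header. The sketch below assumes the project-level location `.claude/agents/` and the `name` and `description` header fields; the instructions in the body are illustrative wording, so check the Claude Code documentation for your version before relying on the exact layout.

```shell
# Assumed project-level location for agent definitions.
mkdir -p .claude/agents

# Define the agent: a YAML header followed by its instructions.
cat > .claude/agents/brutal-critic-agent.md <<'EOF'
---
name: brutal-critic-agent
description: Blunt reviewer that checks drafts for technical accuracy.
---

You are a strict technical reviewer. Read the text you are given, list every
claim that looks inaccurate or unsupported, and explain why in one or two
sentences each. Do not rewrite the draft.
EOF
```

Because the definition lives inside the project folder, it can be versioned and shared along with the rest of your files.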

Unifying Your Workflow with open-code

The ecosystem is evolving rapidly. Open-source tools like open-code are taking this strategy to the next level. open-code provides a unified terminal interface that can connect to various models, including Claude (using your Pro subscription), Grok, and, most importantly, local models running via tools like Ollama. This is the endgame: a single, model-agnostic interface that keeps you in control of your tools and your data.

Why This Is the Future of AI-Assisted Work

Breaking free from the browser isn't about being contrarian; it's about reclaiming ownership and building a future-proof system. When your context, files, and project history reside locally, you own them. If a revolutionary new AI model is released tomorrow, you don't have to migrate anything. You simply install its CLI tool, point it to your project directory, and it can pick up right where the others left off.

This is more than a productivity hack; it's a paradigm shift. It transforms you from a passive user of a web app into an active conductor of a powerful, multi-faceted AI orchestra. The terminal may seem intimidating, but the power it unlocks is undeniable. The tools are accessible, with free tiers (gemini-cli) and powerful open-source options (open-code) available to get you started today.

What is the biggest frustration you have with your current AI workflow? Share your thoughts in the comments below - let's discuss how the terminal can solve it.
